AI startup Anthropic has unveiled its latest conversational model, Claude 2.1, touting new features aimed at enterprise applications. The release doubles Claude's context length limit to 200,000 tokens and cuts the rate of false statements by half relative to Claude 2.0.

Some key highlights of Claude 2.1 include:

- Reduced hallucination and greater reliability via improved honesty

- Expanded context window, unlocking new use cases like longer-form content and retrieval-augmented generation (RAG)

- Early-access tool use and function calling for greater flexibility and extended capabilities

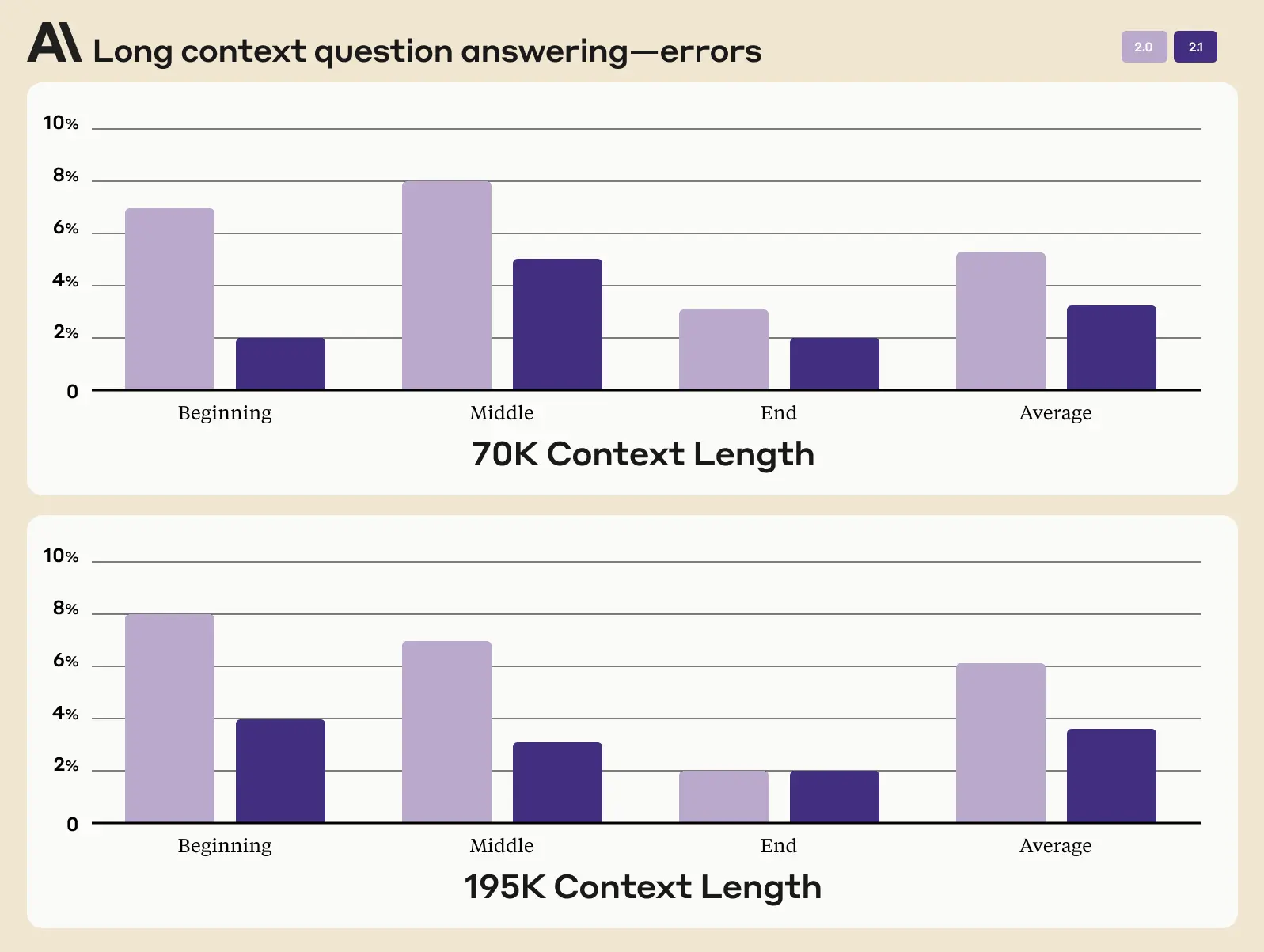

Claude 2.1 represents Anthropic's ongoing efforts to balance cutting-edge AI capabilities with safety and accuracy. The updated model can now process documents up to 150,000 words in length. This equates to over 500 pages of material such as technical documentation, financial statements, or even literary works.
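As a rough illustration of how those figures relate, the sketch below derives the ratios they imply (about 0.75 words per token and at most about 300 words per page) and uses them to estimate whether a document fits in the context window. The ratios come only from the numbers quoted here; actual token counts depend on the tokenizer and the text, and the function names are hypothetical.

```python
# Back-of-the-envelope ratios implied by the article's figures.
# Real token counts vary with the tokenizer and the text itself.
CONTEXT_TOKENS = 200_000   # context window quoted in the article
APPROX_WORDS = 150_000     # word count quoted in the article
APPROX_PAGES = 500         # "over 500 pages", so this is a lower bound on pages

WORDS_PER_TOKEN = APPROX_WORDS / CONTEXT_TOKENS  # 0.75
WORDS_PER_PAGE = APPROX_WORDS / APPROX_PAGES     # 300.0 (upper bound)


def estimate_tokens(word_count: int) -> int:
    """Rough token estimate for a document of `word_count` words."""
    return round(word_count / WORDS_PER_TOKEN)


def fits_in_context(word_count: int, limit: int = CONTEXT_TOKENS) -> bool:
    """True if the estimated token count is within the context limit."""
    return estimate_tokens(word_count) <= limit
```

By this estimate, a 150,000-word document sits right at the limit, while a 160,000-word document would need to be trimmed or split.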

"Our users can now upload entire codebases, S-1s, or even long literary works like The Iliad or The Odyssey," the company explained in a blog post. "By being able to talk to large bodies of content or data, Claude can summarize, perform Q&A, forecast trends, compare and contrast multiple documents, and much more."

Anthropic describes processing a 200,000-token message as a complex feat and an industry first. Tasks that would take a person hours may take Claude minutes, and the company expects latency to improve substantially as the technology matures.

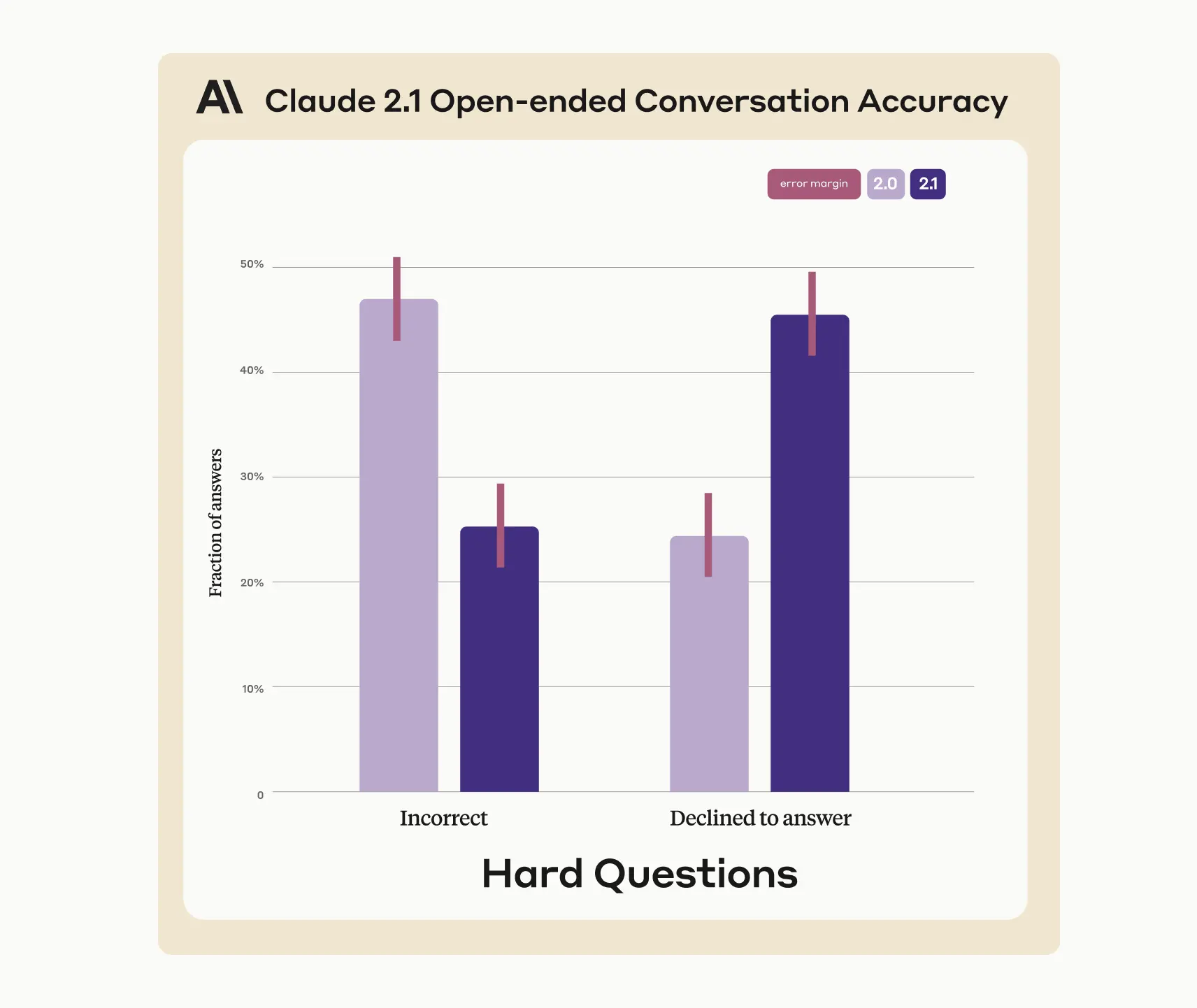

Anthropic's testing indicates that Claude 2.1's rate of hallucinations or false claims dropped by half compared with Claude 2.0. The company curated a set of factual questions targeting areas where AI models often falter. On these, Claude 2.1 was substantially more likely to admit uncertainty than to provide incorrect information.

The updated model also demonstrated meaningful comprehension and summarization gains, particularly for lengthy, complex documents such as contracts, financial reports, and technical specifications that demand high accuracy. Anthropic recorded a 30% decrease in wrong answers and a 3-4x lower rate of Claude 2.1 falsely concluding that a document supports a particular claim.

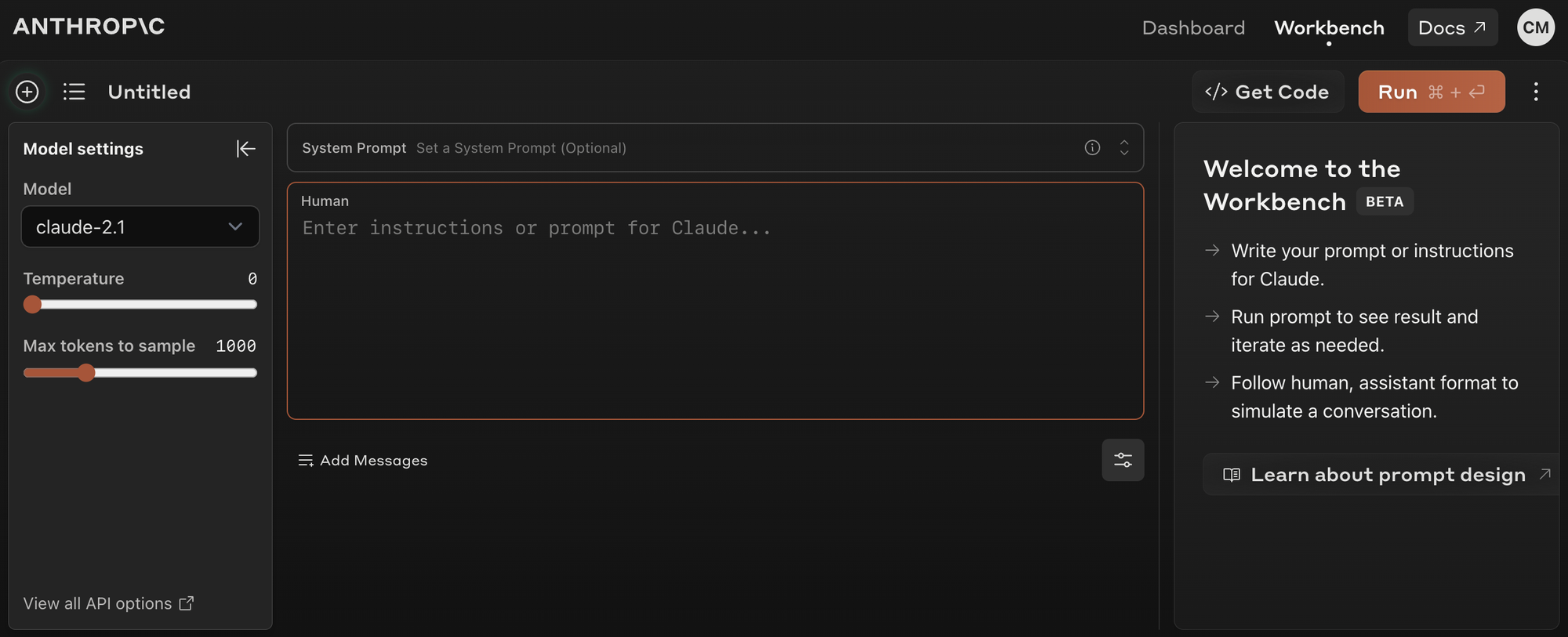

Revamped Developer Experience and New System Prompts

Anthropic has streamlined the developer experience for Claude 2.1's API. The new Workbench product allows for prompt iteration in a playground-style environment, along with new model settings for optimized behavior. Additionally, the introduction of system prompts enables users to set specific instructions, allowing Claude to adopt particular personalities or roles and provide responses tailored to user needs.
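To make the idea of a system prompt concrete, here is a minimal sketch that assembles one into a prompt string. It assumes a plain-text convention in which the system prompt is placed before the first `Human:` turn and the prompt ends with an `Assistant:` turn. That convention, the constant names, and the helper `build_prompt` are assumptions for illustration and are not described in the article; consult Anthropic's API documentation for the actual request format.

```python
# Assumed turn markers for a plain-text prompt format (not from the article).
HUMAN_TURN = "\n\nHuman:"
ASSISTANT_TURN = "\n\nAssistant:"


def build_prompt(system: str, user_message: str) -> str:
    """Place the system instructions first, then one user turn,
    and end with an open assistant turn for the model to complete."""
    return f"{system.strip()}{HUMAN_TURN} {user_message.strip()}{ASSISTANT_TURN}"


prompt = build_prompt(
    "You are a careful contracts analyst. Say so when you are unsure.",
    "Summarize the termination clause in the attached agreement.",
)
```

Keeping the role instructions in one place, separate from the user's message, is what lets the same application reuse a persona across many requests.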

Introducing API Tool Use

Claude 2.1 also introduces a beta tool use feature allowing integration with existing systems and data sources. Early adopters can build applications leveraging Claude's language capabilities to parse natural language requests into API calls, search private databases or take simple actions through software. Example use cases include:

- Using a calculator for complex numerical reasoning

- Translating requests into structured API calls

- Answering questions by searching databases or using a web search API

- Taking simple actions in software via private APIs

- Connecting to product datasets to make recommendations and help users complete purchases
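The first two use cases can be sketched from the application's side: the model turns a request into a structured call, and the application looks up the named tool and runs it. The JSON shape (`tool`, `arguments`), the `TOOLS` registry, and the `dispatch` helper below are hypothetical and do not reflect Anthropic's actual tool use API, which the article does not detail. The calculator evaluates only basic arithmetic rather than arbitrary code.

```python
import ast
import json
import operator

# Arithmetic operators the hypothetical calculator tool will accept.
_BINARY = {ast.Add: operator.add, ast.Sub: operator.sub,
           ast.Mult: operator.mul, ast.Div: operator.truediv}
_UNARY = {ast.USub: operator.neg}


def calculator(expression: str) -> float:
    """Evaluate +, -, *, / on numeric literals; reject anything else."""
    def ev(node):
        if (isinstance(node, ast.Constant)
                and isinstance(node.value, (int, float))
                and not isinstance(node.value, bool)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _BINARY:
            return _BINARY[type(node.op)](ev(node.left), ev(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _UNARY:
            return _UNARY[type(node.op)](ev(node.operand))
        raise ValueError("unsupported expression")
    return ev(ast.parse(expression, mode="eval").body)


# Registry mapping tool names to local functions (hypothetical).
TOOLS = {"calculator": calculator}


def dispatch(tool_call_json: str):
    """Run a model-produced call of the assumed form
    {"tool": <name>, "arguments": {...}} against the registry."""
    call = json.loads(tool_call_json)
    name = call["tool"]
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**call["arguments"])


result = dispatch(
    '{"tool": "calculator", "arguments": {"expression": "(1200 * 3) / 4"}}'
)
```

In a real integration the tool's result would be passed back to the model so it can phrase the final answer. Restricting dispatch to a fixed registry keeps the model from triggering anything the application has not explicitly exposed.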

The updated model is available now via Anthropic's API and powers the claude.ai website. Free users can access core features, while paying customers unlock the full 200,000-token context window for large document analysis.