At AWS re:Invent 2023, AWS CEO Adam Selipsky and NVIDIA CEO Jensen Huang highlighted the role of generative AI in cloud transformation. They also spotlighted their companies' growing partnership to bring NVIDIA's latest hardware and software to enterprise customers worldwide.

NVIDIA founder and CEO Jensen Huang joined Selipsky on stage during Tuesday's keynote to announce an expanded AWS-NVIDIA collaboration. It brings new infrastructure, software and services for advanced graphics, machine learning and generative AI to power next-generation applications.

The centerpiece of the announcement is that AWS will be the first cloud provider to offer NVIDIA GH200 Grace Hopper Superchips with NVIDIA's new multi-node NVLink technology. As Huang explained, each GH200 combines NVIDIA's Arm-based Grace CPU and its Hopper GPU on a single module, linked by the high-bandwidth NVLink-C2C chip-to-chip interconnect.

In the GH200 NVL32 configuration, 32 GH200 Superchips are connected through NVLink and NVSwitch into a single instance, with each superchip delivering up to 4 petaflops of AI performance. A single NVL32 instance offers up to 20 TB of shared memory, enough for the terabyte-scale datasets and models behind modern AI workloads.
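To put the per-superchip figure in context, the short calculation below multiplies it out for a full 32-superchip NVL32 instance. It is a sketch under stated assumptions: it takes the "up to 4 petaflops" peak at face value and assumes ideal linear scaling; the constant and function names are illustrative, not part of any AWS or NVIDIA API.

```python
# Back-of-the-envelope peak compute for one GH200 NVL32 instance.
# Assumption: 4 petaflops is the per-superchip peak quoted in the article;
# sustained throughput depends on numeric precision and workload.
PFLOPS_PER_SUPERCHIP = 4
SUPERCHIPS_PER_NVL32 = 32


def aggregate_pflops(superchips: int, pflops_each: float = PFLOPS_PER_SUPERCHIP) -> float:
    """Peak petaflops for `superchips` devices, assuming ideal linear scaling."""
    return superchips * pflops_each


nvl32_peak = aggregate_pflops(SUPERCHIPS_PER_NVL32)
print(f"GH200 NVL32 peak: {nvl32_peak:.0f} petaflops")  # prints: GH200 NVL32 peak: 128 petaflops
```

The 128-petaflop result is a theoretical ceiling: real training jobs lose some of it to communication and memory stalls, which is why the interconnect matters as much as the chips.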

Combined with other AWS technologies, including Elastic Fabric Adapter (EFA) networking, the AWS Nitro System and Amazon EC2 UltraClusters, customers can scale to thousands of GH200 Superchips, forming a flexible cloud-based supercomputer built for AI.

As part of the collaboration, AWS will host NVIDIA DGX Cloud, delivering DGX as a managed service. DGX Cloud removes infrastructure management burdens so developers can focus on training models.

DGX Cloud on AWS will be the first to feature GH200 NVL32 configurations, which are intended to accelerate the training of next-generation models exceeding one trillion parameters.
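Why do trillion-parameter models need terabyte-scale memory? A rough estimate helps. The sketch below assumes about 2 bytes per parameter for BF16 weights alone, and about 16 bytes per parameter for mixed-precision training with the Adam optimizer (FP16 weights and gradients plus FP32 master weights, momentum and variance). The 16-byte figure is a common rule of thumb, not an AWS or NVIDIA number. It ignores activations and framework overhead, so real requirements are higher.

```python
# Rough memory estimate for a dense model with one trillion parameters.
# Assumptions: 2 bytes/param for BF16 inference weights; 16 bytes/param for
# mixed-precision Adam training (2 fp16 weights + 2 fp16 grads +
# 4 fp32 master weights + 4 fp32 momentum + 4 fp32 variance).
# Activations, buffers and framework overhead are ignored.
BYTES_PER_PARAM_WEIGHTS_BF16 = 2
BYTES_PER_PARAM_TRAINING = 2 + 2 + 4 + 4 + 4  # = 16


def terabytes(num_params: int, bytes_per_param: int) -> float:
    """Memory footprint in decimal terabytes (1 TB = 10**12 bytes)."""
    return num_params * bytes_per_param / 1e12


params = 1_000_000_000_000  # one trillion parameters
weights_tb = terabytes(params, BYTES_PER_PARAM_WEIGHTS_BF16)   # 2.0 TB
training_tb = terabytes(params, BYTES_PER_PARAM_TRAINING)      # 16.0 TB
print(f"weights: {weights_tb:.1f} TB, training state: {training_tb:.1f} TB")
```

Even before counting activations, the training state alone exceeds what any single GPU holds. This is why AWS emphasizes pooling memory across many superchips; it has quoted up to 20 TB of shared memory for a single GH200 NVL32 instance.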

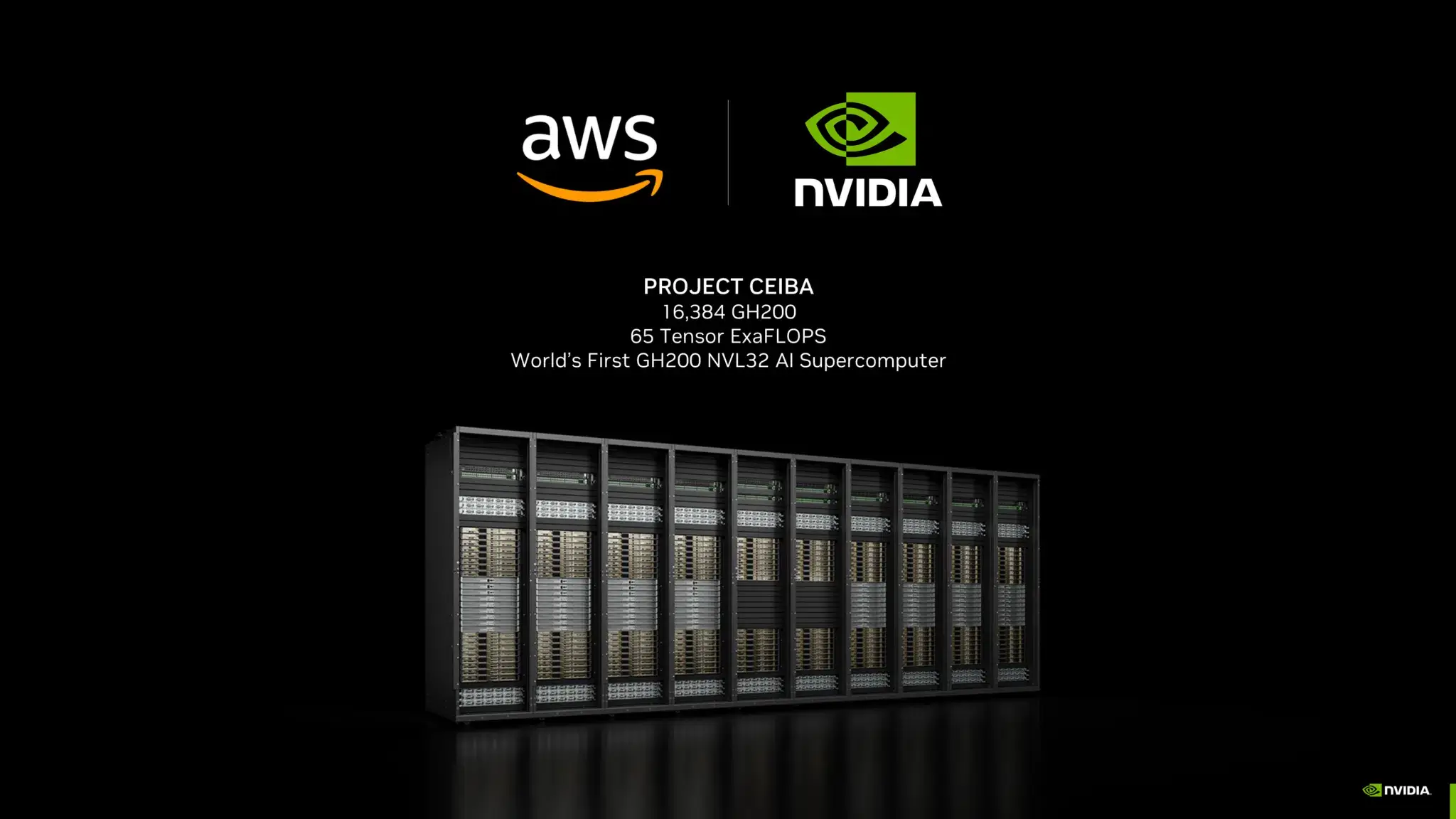

In perhaps their most ambitious project, AWS and NVIDIA are collaborating on Project Ceiba to build what they describe as the world's fastest GPU-powered AI supercomputer.

Project Ceiba, named after the Amazonian Ceiba tree, will comprise 16,384 NVIDIA GH200 Superchips interconnected with AWS's EFA networking and will be capable of processing 65 exaflops of AI.
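The 65-exaflop headline follows directly from the superchip count, as this quick check shows. It assumes each GH200 contributes the same "up to 4 petaflops" peak quoted for a single superchip. The NVL32 unit count is a hypothetical layout, computed only for illustration; it is not a published Ceiba topology.

```python
# Sanity check of the Project Ceiba headline figure.
# Assumptions: each GH200 contributes ~4 petaflops of peak AI performance;
# the NVL32 unit count assumes (hypothetically) the cluster is built
# entirely from 32-superchip NVL32 instances.
CEIBA_SUPERCHIPS = 16_384
PFLOPS_PER_SUPERCHIP = 4
SUPERCHIPS_PER_NVL32 = 32

total_pflops = CEIBA_SUPERCHIPS * PFLOPS_PER_SUPERCHIP  # 65,536 petaflops
total_exaflops = total_pflops / 1_000                   # 1 exaflop = 1,000 petaflops
nvl32_units = CEIBA_SUPERCHIPS // SUPERCHIPS_PER_NVL32  # 512 units

# prints: 65,536 PF ≈ 65.5 EF across 512 NVL32 units
print(f"{total_pflops:,} PF ≈ {total_exaflops:.1f} EF across {nvl32_units} NVL32 units")
```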

As Huang highlighted, NVIDIA will use Project Ceiba for its own AI research and development in areas such as graphics, robotics, climate science and drug discovery. NVIDIA expects the system's scale to drive advances in generative AI.

The partners are also introducing Amazon EC2 instances to make cutting-edge AI hardware easily accessible for a broader range of customers:

- P5e instances powered by NVIDIA's latest H200 Tensor Core GPUs for large-scale generative AI and HPC workloads

- G6 instances with NVIDIA L4 GPUs and G6e instances with NVIDIA L40S GPUs for a wide range of workloads, including AI fine-tuning and inference, graphics and video

With new hardware and services in areas like AI infrastructure, software libraries, and foundation models, AWS and NVIDIA aim to jointly drive the next phase of generative AI adoption.