Intel today unveiled its latest data center processors, the 5th Generation Xeon Scalable lineup (code-named Emerald Rapids), delivering significant leaps in AI acceleration, security, and performance efficiency. The new chips aim to cement Intel’s leadership in the lucrative data center CPU market.

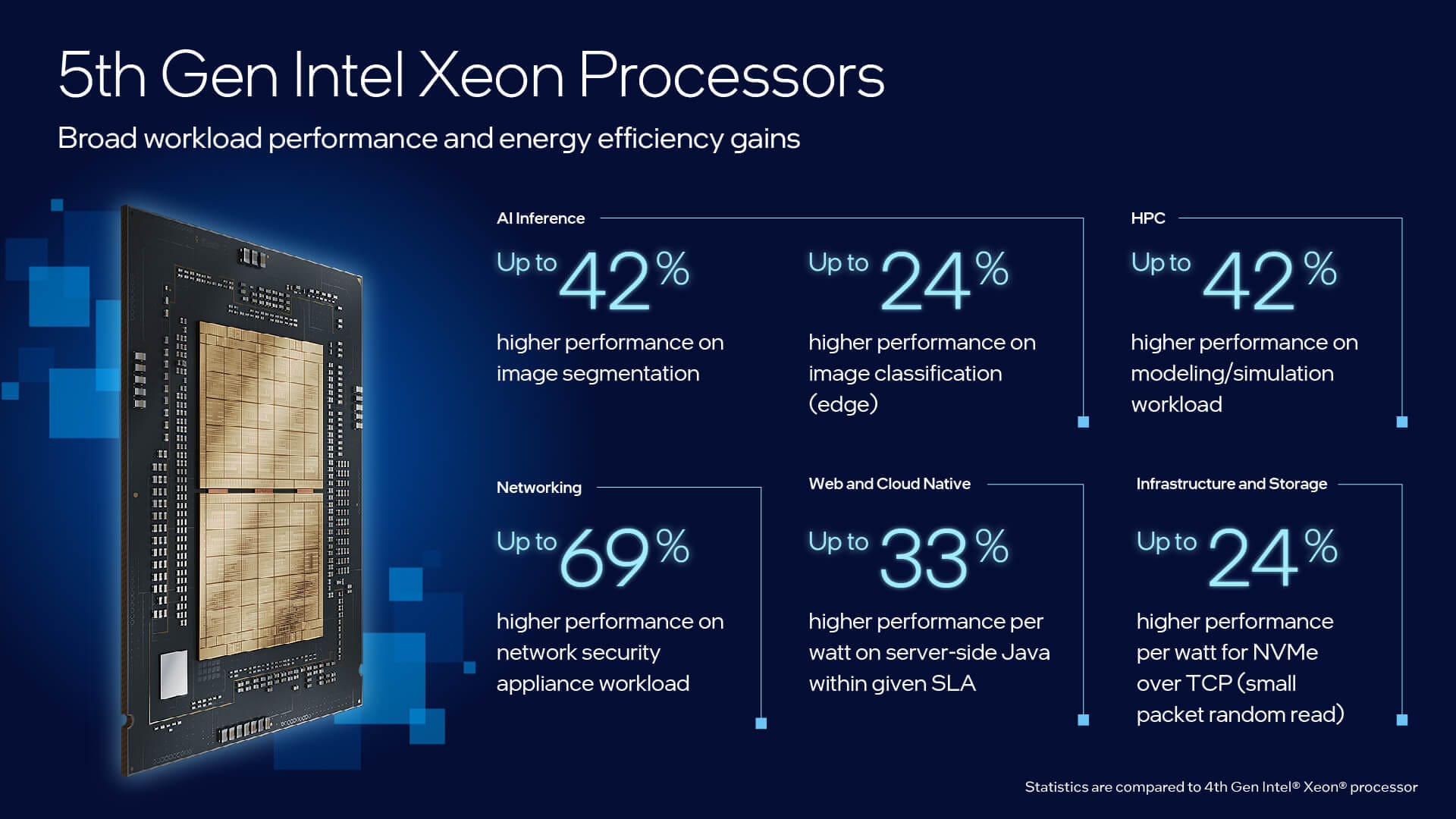

Most notably, the 5th Gen Xeon incorporates AI acceleration into every processor core, enabling up to 42% faster inference speeds on large neural networks compared to prior generations. This effectively eliminates the need for add-on AI accelerators for many workloads. The beefed-up on-chip AI capabilities unlock new possibilities for advanced AI adoption across cloud, network, and edge environments.

Beyond AI, Intel says the new Xeons deliver a 21% average performance boost over 4th Gen chips for general compute-intensive workloads. They also provide 36% higher performance per watt, a substantial efficiency gain. For data centers upgrading from older-generation Xeon CPUs, total cost of ownership could be reduced by up to 77% with the new offerings.
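To put the two headline percentages in perspective, the short sketch below works through the arithmetic they imply. It assumes, purely for illustration, that the 21% performance gain and the 36% performance-per-watt gain apply to the same workload, which the announcement does not state; the function names and the 100-server fleet are hypothetical.

```python
import math


def relative_power(perf_gain: float, perf_per_watt_gain: float) -> float:
    """Power draw relative to the previous generation.

    power = performance / (performance per watt), so the ratio of new
    to old power is (1 + perf_gain) / (1 + perf_per_watt_gain).
    Assumes both gains were measured on the same workload (an
    illustrative assumption, not a claim from the announcement).
    """
    return (1.0 + perf_gain) / (1.0 + perf_per_watt_gain)


def servers_needed(old_servers: int, perf_gain: float) -> int:
    """Servers required to match the throughput of an older fleet,
    assuming throughput scales linearly with per-server performance."""
    return math.ceil(old_servers / (1.0 + perf_gain))


if __name__ == "__main__":
    # Figures quoted in the article: +21% performance, +36% perf/watt.
    ratio = relative_power(0.21, 0.36)
    print(f"Relative power at the higher performance level: {ratio:.2f}x")
    # Hypothetical fleet of 100 previous-generation servers.
    print(f"Servers needed to replace 100: {servers_needed(100, 0.21)}")
```

Under those assumptions, a chip that is 21% faster and 36% more efficient would draw roughly 11% less power while doing more work. Real savings depend on the workload mix and on which older generation is being replaced.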

Under the hood, the 5th Gen Xeons scale up to 64 CPU cores per processor and include nearly triple the L3 cache of the previous generation. They support fast DDR5 memory and introduce the new Intel UPI 2.0 interconnect, which enables up to 20 gigatransfers per second between sockets to relieve inter-socket bottlenecks.

The processors are drop-in compatible with existing Xeon-powered data centers, allowing rapid deployments. Major server OEMs like Dell and Lenovo plan to launch Emerald Rapids-based offerings in Q1 2024. Top cloud providers will also roll out the new chips across their infrastructure.

Moreover, the new Xeons incorporate Intel’s Trust Domain Extensions (Intel TDX) for heightened data security and privacy safeguards at the virtual machine level. TDX allows cloud and data center operators to offer confidential computing services, protecting sensitive customer data and workloads.

In customer validation, IBM saw up to 2.7X higher database query throughput with the 5th Gen chips. Google Cloud observed a 2X acceleration in threat detection for Palo Alto Networks workloads leveraging the on-chip AI. Gaming firm Gallium Studios improved real-time game inference by 6.5X using the new Xeons, saving on cost and latency.

Intel is betting big on its 5th Generation Xeons to extend its data center leadership. By adding more AI performance and efficiency while retaining backwards compatibility, Intel aims to give existing customers an attractive upgrade path while priming their infrastructure for the AI-centric workloads of the future.