Meta AI has unveiled a new artificial intelligence system called SeamlessM4T that can translate between nearly 100 spoken and written languages. The model represents a major advance towards the goal of creating a universal translator.

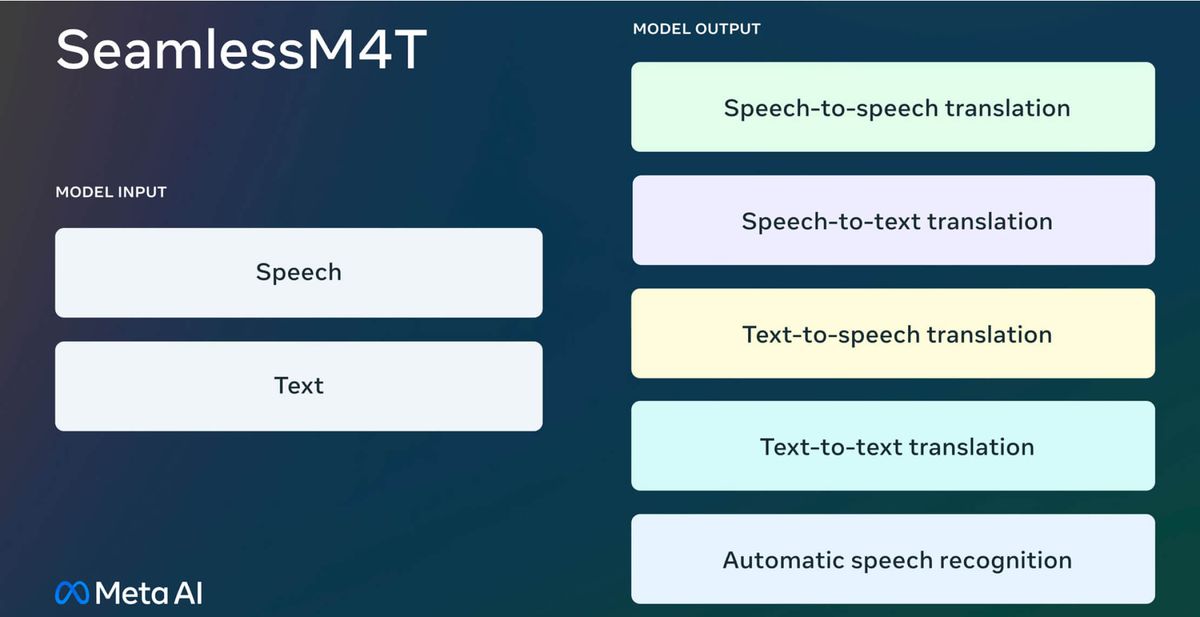

Inspired by the fictional Babel Fish from *The Hitchhiker’s Guide to the Galaxy*, SeamlessM4T is Meta's attempt to build a single multilingual system that can handle translation needs across both speech and text. Meta describes it as the first all-in-one AI system of its kind: one unified model architecture performs speech-to-speech, speech-to-text, text-to-speech, and text-to-text translation, as well as automatic speech recognition. Specifically, the model supports:

- Automatic speech recognition across almost 100 languages.

- Speech-to-text and text-to-text translation for nearly 100 input and output languages.

- Speech-to-speech translation available for close to 100 input languages, translating into 36 output languages, including English.

- Text-to-speech translation that covers almost 100 input languages and 36 output languages.
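The five capabilities listed above can be viewed as combinations of input modality, output modality, and whether the language changes. The sketch below encodes that view to show how one multitask model can cover all of them. The abbreviations (S2ST, S2TT, T2ST, T2TT, ASR) are conventional shorthand, while `select_task` and the three-letter language codes are illustrative assumptions, not SeamlessM4T's actual interface.

```python
from enum import Enum


class Modality(Enum):
    SPEECH = "speech"
    TEXT = "text"


# The five listed tasks, keyed by (input modality, output modality, language changes?).
TASKS = {
    (Modality.SPEECH, Modality.SPEECH, True): "S2ST",  # speech-to-speech translation
    (Modality.SPEECH, Modality.TEXT, True): "S2TT",    # speech-to-text translation
    (Modality.TEXT, Modality.SPEECH, True): "T2ST",    # text-to-speech translation
    (Modality.TEXT, Modality.TEXT, True): "T2TT",      # text-to-text translation
    (Modality.SPEECH, Modality.TEXT, False): "ASR",    # transcription, same language
}


def select_task(src: Modality, tgt: Modality, src_lang: str, tgt_lang: str) -> str:
    """Hypothetical helper: pick the task a single multitask model would run."""
    key = (src, tgt, src_lang != tgt_lang)
    if key not in TASKS:
        raise ValueError(
            f"unsupported combination: {src.value}->{tgt.value}, {src_lang}->{tgt_lang}"
        )
    return TASKS[key]
```

For example, `select_task(Modality.SPEECH, Modality.TEXT, "spa", "spa")` returns `"ASR"`. Same-language text-to-text is rejected because none of the listed tasks covers it.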

Speech-to-text and speech-to-speech systems already exist, but they typically cover only a limited set of languages and rely on multiple cascaded subsystems that split the process into stages (for example, speech recognition followed by text translation and then speech synthesis). This limits both scalability and performance and makes seamless translation hard to achieve. SeamlessM4T addresses these issues with a single end-to-end model, which Meta reports delivers higher-quality translation, especially for lower-resource languages.
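To make the contrast concrete, here is a toy sketch of a cascaded speech-to-speech pipeline. The stage functions (`toy_asr`, `noisy_asr`, `toy_mt`, `toy_tts`) are hypothetical stand-ins that operate on tagged strings rather than real audio, and `cascade_success_rate` assumes stage errors are independent, which real systems need not satisfy. It illustrates one commonly cited weakness of cascades: a mistake made early is handed downstream, where later stages cannot repair it.

```python
import math
from typing import Callable, Sequence

# A "stage" maps one representation to another (audio -> text, text -> text, text -> audio).
Stage = Callable[[str], str]


def cascade(stages: Sequence[Stage]) -> Stage:
    """Chain independent subsystems so each one consumes the previous one's output."""
    def run(x: str) -> str:
        for stage in stages:
            x = stage(x)
        return x
    return run


def cascade_success_rate(stage_accuracies: Sequence[float]) -> float:
    """Probability the whole cascade is error-free, assuming independent stage errors."""
    return math.prod(stage_accuracies)


# Hypothetical toy stages: "audio" is just a string tagged with "audio:".
def toy_asr(audio: str) -> str:
    return audio.removeprefix("audio:")


def noisy_asr(audio: str) -> str:
    # Simulates a recognition error: "hola" is misheard as "ola".
    return toy_asr(audio).replace("hola", "ola")


TOY_LEXICON = {"hola": "hello", "mundo": "world"}


def toy_mt(text: str) -> str:
    # Word-by-word lookup; unknown words pass through untranslated.
    return " ".join(TOY_LEXICON.get(word, word) for word in text.split())


def toy_tts(text: str) -> str:
    return "audio:" + text
```

With `cascade([toy_asr, toy_mt, toy_tts])`, the input `"audio:hola mundo"` becomes `"audio:hello world"`; swapping in `noisy_asr` yields `"audio:ola world"`, because the translation stage only sees the misrecognized text. Under the independence assumption, three stages that are each 90% accurate give roughly 0.9 × 0.9 × 0.9 ≈ 0.73 end-to-end.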

Meta’s previous work in this area includes No Language Left Behind (NLLB), a text translation model covering 200 languages, and the Universal Speech Translator project, which produced the first AI-powered speech translation system for a primarily oral language (Hokkien). SeamlessM4T draws on these and other efforts to deliver translation across speech and text in a single system.

Training a multilingual speech translation model like SeamlessM4T requires massive amounts of aligned speech and text data. However, relying only on human-transcribed and human-translated speech does not scale to the challenge of speech translation across 100 languages.

To solve this, Meta built SONAR (Sentence-level mOdality- and laNguage-Agnostic Representations), a new massively multilingual and multimodal sentence embedding space covering text in 200 languages. Using a teacher-student approach, Meta then extended SONAR to speech, where it currently covers 36 languages. Meta mined tens of billions of sentences and 4 million hours of speech from public repositories across the web, automatically aligning more than 470,000 hours of speech with text translations or with other speech. This corpus, called SeamlessAlign, is the largest open speech/speech and speech/text parallel corpus to date in terms of total volume and language coverage.
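The article does not spell out how pairs are selected from the shared embedding space. A common technique, assumed for this sketch, is margin-based nearest-neighbor mining: a candidate pair scores well when its cosine similarity clearly exceeds the average similarity of each side to its k nearest neighbors in the other modality (the "ratio margin"). The toy vectors stand in for SONAR speech and text embeddings; `mine_pairs`, `k`, and `threshold` are hypothetical names and values, and a real pipeline would use an approximate nearest-neighbor index rather than this brute-force loop.

```python
import math
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def knn_mean_similarity(query: Vector, pool: Sequence[Vector], k: int) -> float:
    """Average cosine similarity between `query` and its k nearest neighbours in `pool`."""
    sims = sorted((cosine(query, item) for item in pool), reverse=True)[:k]
    return sum(sims) / len(sims)


def mine_pairs(
    speech_embs: Sequence[Vector],
    text_embs: Sequence[Vector],
    k: int = 2,
    threshold: float = 1.05,
) -> List[Tuple[int, int, float]]:
    """Return (speech_idx, text_idx, margin) for each speech segment whose best
    ratio-margin match in the text pool clears `threshold`."""
    # Neighbourhood density of each item, measured against the other modality.
    speech_density = [knn_mean_similarity(s, text_embs, k) for s in speech_embs]
    text_density = [knn_mean_similarity(t, speech_embs, k) for t in text_embs]

    pairs = []
    for i, s in enumerate(speech_embs):
        best_j, best_margin = -1, float("-inf")
        for j, t in enumerate(text_embs):
            denom = (speech_density[i] + text_density[j]) / 2
            if denom <= 0:
                continue  # no meaningful neighbourhood to compare against
            margin = cosine(s, t) / denom
            if margin > best_margin:
                best_j, best_margin = j, margin
        if best_j >= 0 and best_margin >= threshold:
            pairs.append((i, best_j, best_margin))
    return pairs
```

Normalizing by neighborhood density penalizes "hub" embeddings that are close to everything, so a pair is kept only if the two items are unusually close to each other. Raising `threshold` trades recall for precision in the mined corpus.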

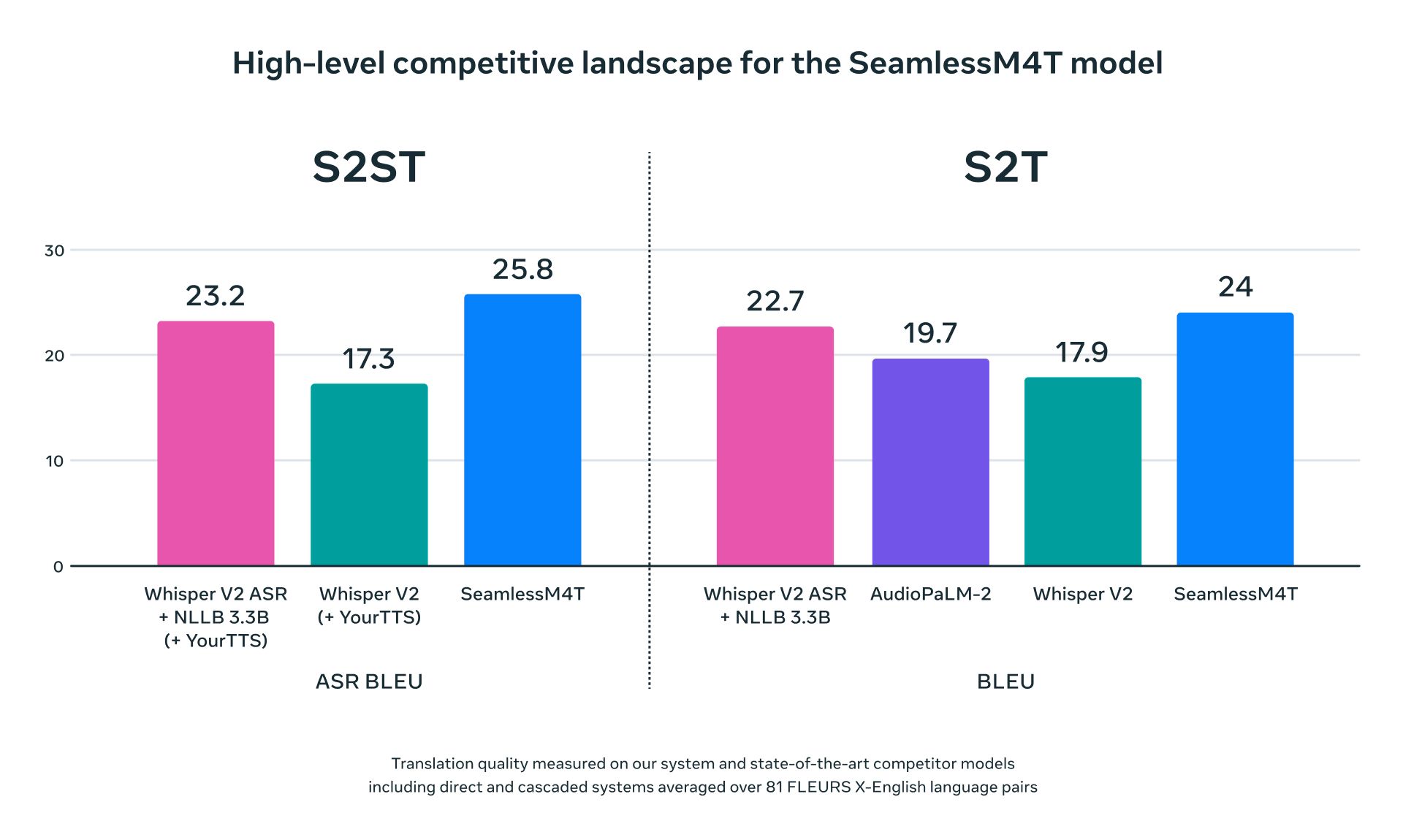

Combined with other labeled and pseudo-labeled data, this corpus was used to train SeamlessM4T to translate into and out of English for both speech and text. The results speak for themselves, and you can try the demo yourself.

SeamlessM4T achieves state-of-the-art results for nearly 100 languages. It is especially strong on low- and mid-resource languages, while maintaining strong performance on high-resource languages such as English, Spanish, and German.

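The evaluation is not limited to text-based metrics. Meta extended its text-free evaluation metric into BLASER 2.0, which can score translations across both speech and text. In robustness tests on speech-to-text tasks, SeamlessM4T also handled background noise and speaker variation better than the current state-of-the-art model.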

A central part of Meta's approach is its stated commitment to responsible AI. Meta studied toxicity and bias in the system's translations, extended its multilingual toxicity classifier to speech so that toxic content can be detected in speech inputs and outputs, and developed tools to quantify gender bias across many translation directions.
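Meta's classifier itself is not described here. As a loose illustration of one related idea, detecting *added* toxicity (toxic terms that appear in a translation but not in its source), the sketch below swaps in a simple word-list check instead of a trained classifier. The word lists, language codes, and function names are hypothetical, and for speech it assumes the audio has already been transcribed to text.

```python
import re
from typing import Dict, Set

# Hypothetical per-language toxic word lists; a real system would use curated
# lists or a trained classifier.
TOXIC_WORDS: Dict[str, Set[str]] = {
    "en": {"idiot", "stupid"},
    "es": {"idiota", "estúpido"},
}


def toxic_count(text: str, lang: str) -> int:
    """Count word-list hits in `text` (case-insensitive, Unicode word tokens)."""
    words = re.findall(r"\w+", text.lower())
    lexicon = TOXIC_WORDS.get(lang, set())
    return sum(1 for word in words if word in lexicon)


def has_added_toxicity(source: str, src_lang: str, translation: str, tgt_lang: str) -> bool:
    """Flag a translation that contains more toxic terms than its source."""
    return toxic_count(translation, tgt_lang) > toxic_count(source, src_lang)
```

Comparing counts on both sides means a faithful translation of an already offensive source is not flagged, while a translation that introduces an insult is: `has_added_toxicity("you are kind", "en", "eres idiota", "es")` returns `True`.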

SeamlessM4T is being released under CC BY-NC 4.0, making it available to researchers and developers for non-commercial use.