Microsoft has announced the launch of text-to-speech avatars on its Azure AI platform, allowing businesses to create personalized digital humans powered by natural language AI. The new avatars enable photorealistic avatar videos and interactive experiences directly from text input. Users can build custom virtual assistants, training videos, digital doubles, and more.

Unveiled this week at Microsoft Ignite, the avatars combine neural text-to-speech models with computer vision breakthroughs. Users can upload a few images and video samples of a person to train a custom avatar. The AI system precisely replicates vocal intonations and lip movements to match the individual's voice and likeness with remarkable fidelity.

High-quality video content production traditionally requires significant time and resources. But Microsoft is betting that its text-to-speech avatars will enable faster and more cost-effective creation of dynamic talking digital anchors, brand spokespeople, tutorial instructors, and other virtual personalities.

The avatars also pave the way for more natural conversation experiences with AI assistants. Microsoft showcased an avatar shopping helper able to process queries and execute transactions in real time using Azure AI search and database capabilities.

Under the hood, the avatar builder leverages robust voice cloning and neural rendering techniques. The system first analyzes input text to generate a phonetic sequence. Azure's state-of-the-art neural text-to-speech model then predicts acoustic features to synthesize the voice with impressive accuracy. Finally, the avatar animation model uses those features to generate photorealistic lip sync and facial expressions.
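To make the three stages concrete, here is a toy sketch of the flow from text to phonemes to acoustic features to mouth shapes. It is not Microsoft's implementation or any Azure API: the lexicon, the duration and energy values, the phoneme-to-viseme table, and every function name are hypothetical stand-ins for what would be trained neural models in a real system.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical toy lexicon; a real system would use a trained
# grapheme-to-phoneme model rather than a lookup table.
TOY_LEXICON = {
    "hello": ["HH", "AH", "L", "OW"],
    "world": ["W", "ER", "L", "D"],
}
VOWELS = {"AH", "OW", "ER"}

# Hypothetical mapping from phonemes to coarse mouth shapes (visemes).
PHONEME_TO_VISEME = {
    "HH": "open", "AH": "open", "OW": "round", "W": "round",
    "ER": "round", "L": "tongue_up", "D": "tongue_up",
}


@dataclass
class AcousticFrame:
    phoneme: str
    duration_ms: int
    energy: float


@dataclass
class VisemeFrame:
    viseme: str
    start_ms: int
    end_ms: int


def text_to_phonemes(text: str) -> List[str]:
    """Stage 1: turn input text into a phonetic sequence."""
    phonemes: List[str] = []
    for word in text.lower().split():
        word = word.strip(".,!?")
        phonemes.extend(TOY_LEXICON.get(word, ["UNK"]))
    return phonemes


def predict_acoustics(phonemes: List[str]) -> List[AcousticFrame]:
    """Stage 2: stand-in for the neural TTS model's acoustic prediction.

    Vowels get a longer duration and higher energy (made-up values).
    """
    return [
        AcousticFrame(p, 120 if p in VOWELS else 60, 1.0 if p in VOWELS else 0.5)
        for p in phonemes
    ]


def animate(frames: List[AcousticFrame]) -> List[VisemeFrame]:
    """Stage 3: stand-in for the animation model; schedules mouth shapes
    on a timeline driven by the acoustic durations."""
    timeline: List[VisemeFrame] = []
    t = 0
    for frame in frames:
        viseme = PHONEME_TO_VISEME.get(frame.phoneme, "neutral")
        timeline.append(VisemeFrame(viseme, t, t + frame.duration_ms))
        t += frame.duration_ms
    return timeline


if __name__ == "__main__":
    for v in animate(predict_acoustics(text_to_phonemes("Hello, world!"))):
        print(f"{v.start_ms:4d}-{v.end_ms:4d} ms  {v.viseme}")
```

The point of the sketch is the data flow: the animation stage never sees the raw text, only the timing and features produced by the speech stage, which is what keeps lip movement aligned with the synthesized audio.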

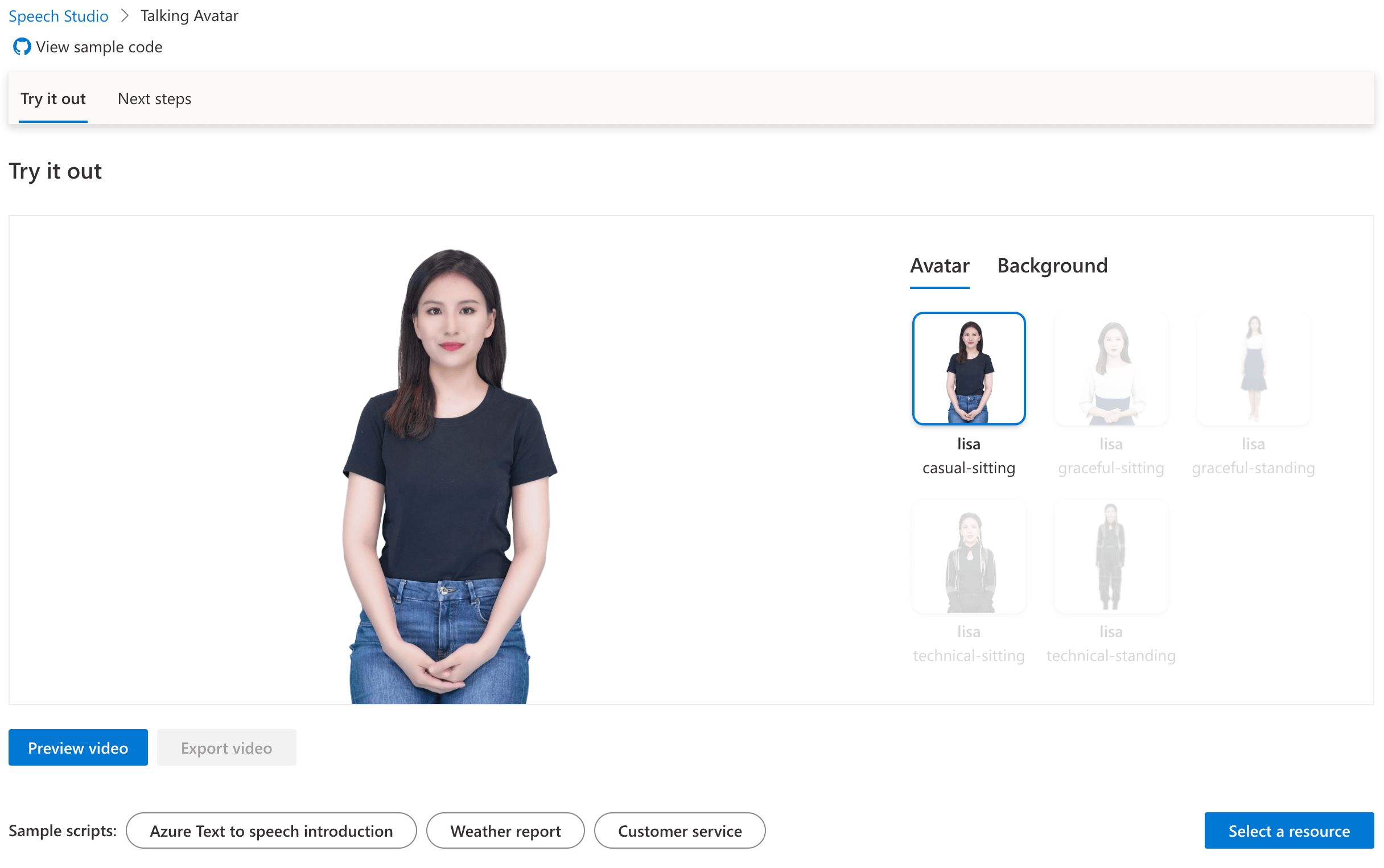

Azure AI Speech offers two variants of this feature: prebuilt and custom text-to-speech avatars. The prebuilt option provides out-of-the-box avatars in various languages and voices, suitable for creating standard video content and interactive applications. The custom option, by contrast, allows customers to create personalized avatars from video recordings they provide.

For now, access to custom avatar creation remains restricted to mitigate risks like deepfakes. But prebuilt avatar options are available to all Azure customers through Speech Studio tools.

Microsoft is not alone in pursuing AI synthetic media: startups like D-ID and Synthesia already power avatar generation at scale. And while pioneering in its own right, Microsoft’s avatar tech lacks many of the capabilities they offer. For example, D-ID and Synthesia allow avatar creation from both text and audio input, versus Microsoft’s text-dependent approach. D-ID also provides accessible mobile apps for easy avatar generation, while Synthesia enables video production at scale through its self-service studio.

Currently, the lip-sync quality of Microsoft's avatars also appears inferior to the seamless realism achieved by Synthesia and D-ID.

However, Microsoft holds a competitive advantage in enterprise cloud resources, speech AI research, and large language model technology. The tech giant is betting that its proficiency in natural language systems, backed by Azure's computing power, will unlock new use cases and push avatar innovation to the next level.

The text-to-speech avatars highlight the accelerating convergence of AI, digital identity, and interaction. As underlying natural language systems continue to evolve, expect avatar-driven experiences to permeate our digital lives, from personalized service agents to immersive metaverse encounters. With this latest innovation, Microsoft bets that the future of AI is not just vocal but visually human.