Video conferencing, now ubiquitous in workplaces and personal communication, has advanced considerably over the years. From the early days of AT&T's Picturephone to contemporary telepresence systems, the primary aim has remained the same: a communication experience that feels as real as face-to-face interaction.

NVIDIA's latest research pushes this goal further by enabling high-fidelity 3D video conferencing using only consumer webcams and AI-mediated techniques. Their breakthroughs demonstrate new possibilities for accessible and immersive virtual interactions. By replacing expensive 3D capture equipment with efficient neural networks, NVIDIA is bringing lifelike 3D telepresence closer to mainstream adoption.

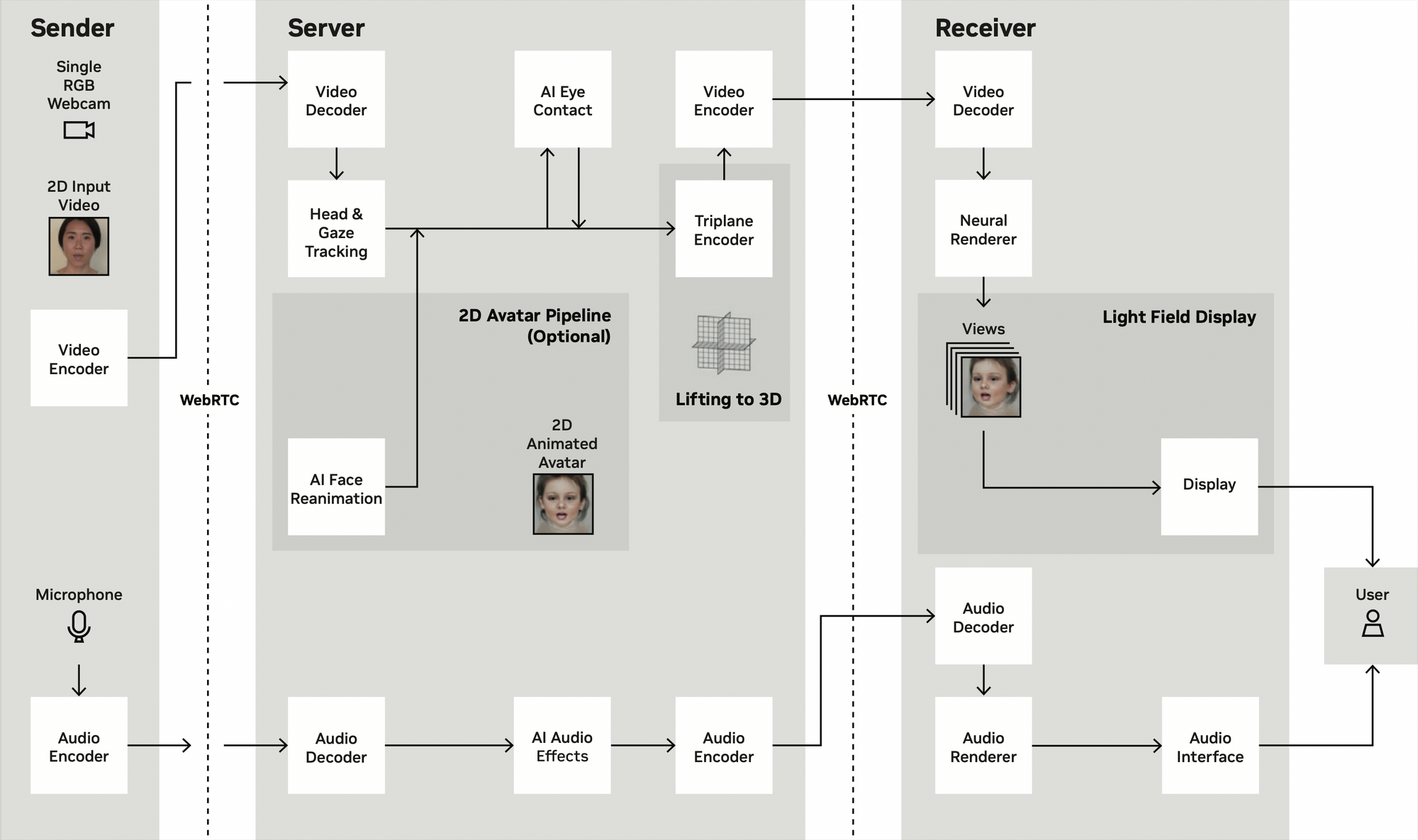

The core innovation presented by NVIDIA researchers at SIGGRAPH 2023 is a novel “3D lifting” method powered by AI. The method uses a neural encoder that takes a standard 2D video feed from a consumer webcam as input and outputs a photorealistic 3D neural representation of the subject. The AI-generated 3D avatar can be rendered and viewed from different angles in real time while requiring only conventional 2D video streaming bandwidth.

Recent advances in volumetric video capture enable compelling 3D conferencing, but they rely on complex multi-camera rigs that are inaccessible to consumers. NVIDIA's AI system achieves the same high-fidelity results using only a webcam.

NVIDIA's approach has several key advantages over traditional 3D conferencing solutions:

- Drastically reduced cost - No specialized cameras needed, only a consumer webcam

- High visual fidelity - Photorealistic 3D avatars, enabled by generative AI

- Flexibility - Support for photorealistic or stylized avatars

- Mutual eye contact - Gaze correction enables natural eye contact

- Lightweight streaming - Transmits only 2D video; the 3D representation is decoded on the receiver's end

The researchers demonstrated a setup that supports two- or three-person video calls. It lifts the 2D faces into neural radiance fields (NeRFs), then streams and renders them on a Looking Glass 3D display, giving each participant a personalized, immersive view without 3D glasses. The system also integrates spatialized audio powered by NVIDIA Maxine, which provides realistic directional sound between participants. The end-to-end system runs in real time on off-the-shelf NVIDIA RTX GPUs, requiring less than 100 milliseconds for capture, streaming, and rendering.
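For readers curious about what rendering a NeRF involves, here is a minimal sketch of the standard volume-rendering step from the NeRF literature: density and color samples along a single camera ray are blended into one pixel color. This is not NVIDIA's implementation. The function name `composite_ray` and its parameters are hypothetical, and in a real system the densities and colors would be predicted by a neural network rather than passed in by hand.

```python
import numpy as np


def composite_ray(densities, colors, t_vals):
    """Blend samples along one ray into a pixel color (standard NeRF quadrature).

    densities: (N,) non-negative volume density at each sample
    colors:    (N, 3) RGB color at each sample, values in [0, 1]
    t_vals:    (N,) increasing distances of the samples along the ray
    """
    densities = np.asarray(densities, dtype=float)
    colors = np.asarray(colors, dtype=float)
    t_vals = np.asarray(t_vals, dtype=float)

    # Spacing between adjacent samples; the last sample gets a very large
    # interval so that any remaining density is fully absorbed there.
    deltas = np.append(np.diff(t_vals), 1e10)

    # Opacity contributed by each sample.
    alphas = 1.0 - np.exp(-densities * deltas)

    # Transmittance: fraction of light that reaches sample i unblocked,
    # i.e. the product of (1 - alpha_j) over all earlier samples j < i.
    transmittance = np.cumprod(np.append(1.0, 1.0 - alphas[:-1]))

    # Each sample's weight is how visible it is times how opaque it is.
    weights = transmittance * alphas
    rgb = (weights[:, None] * colors).sum(axis=0)
    return rgb, weights
```

Calling `composite_ray` once per pixel, with rays cast from a chosen viewpoint, yields an image of the subject from that angle. A multi-view display such as the Looking Glass needs this repeated for many viewpoints per frame, which is one reason the real-time latency figure above is notable.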

NVIDIA's AI approach could bring natural-looking 3D video conferencing closer than ever to mainstream consumer adoption. With further development, the techniques could soon unlock immersive telepresence for virtual meetings, remote work, and long-distance communications.