NVIDIA CEO Jensen Huang has unveiled the company's next-generation GPU architecture, named Blackwell, which promises to significantly advance the field of artificial intelligence. The announcement, made during NVIDIA's GPU Technology Conference (GTC) in San Jose, California, highlights the chipmaker's continued dominance in the AI hardware market.

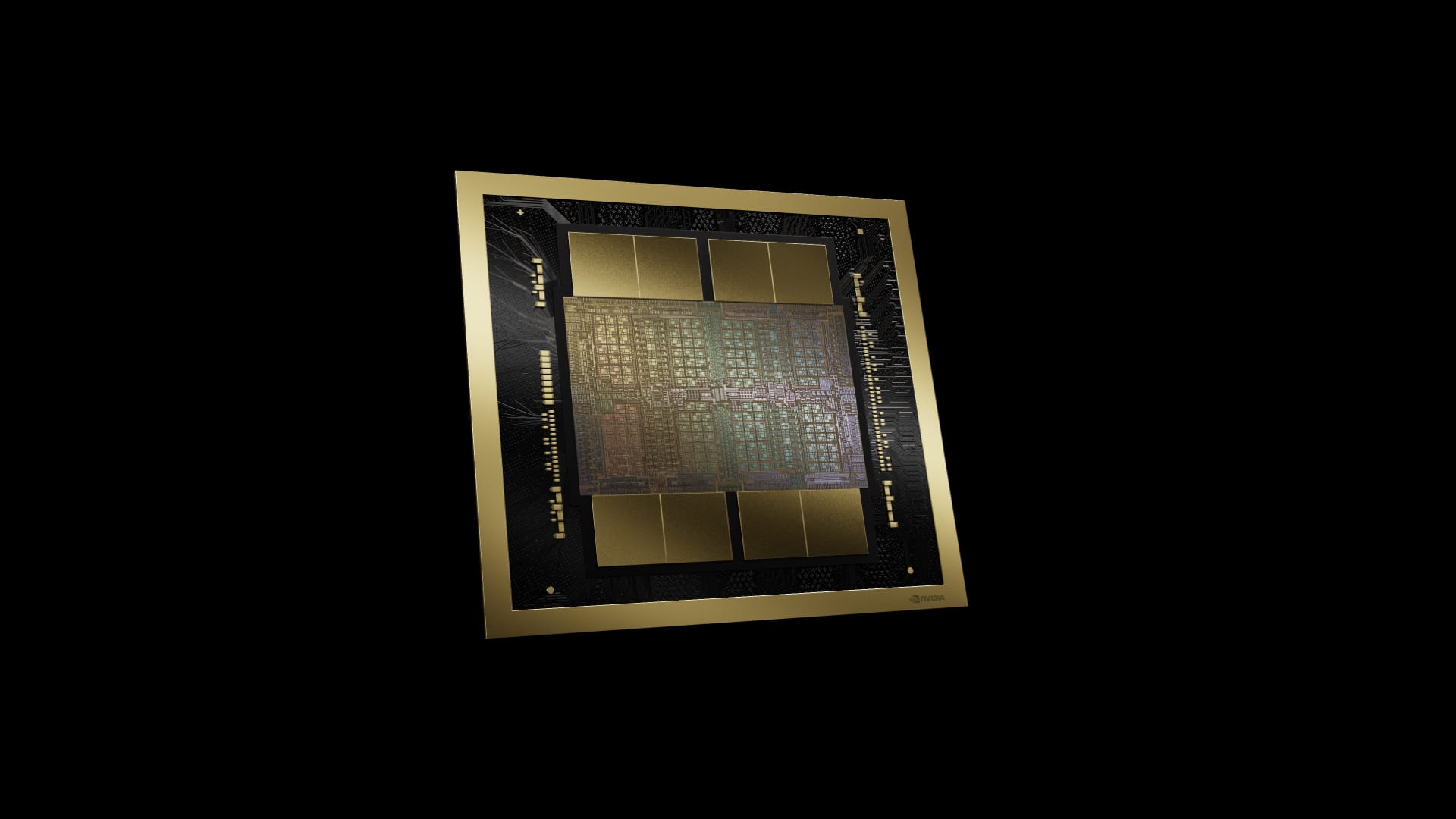

The Blackwell GPU, named after the renowned mathematician and statistician David Harold Blackwell, is set to succeed the highly successful Hopper architecture launched two years ago. Packed with an impressive 208 billion transistors, the Blackwell chip is manufactured using a custom-built 4NP TSMC process, allowing for unparalleled performance and efficiency.

One of the key innovations in the Blackwell architecture is the second-generation Transformer Engine, which supports double the compute and model sizes of its predecessor. This advancement, coupled with NVIDIA's TensorRT-LLM and NeMo Megatron frameworks, will allow for the development and deployment of trillion-parameter-scale AI models at a fraction of the cost and energy consumption of previous generations.

Major tech giants, including Amazon Web Services, Dell Technologies, Google, Meta, Microsoft, OpenAI, Oracle, Tesla, and xAI, are expected to adopt the Blackwell platform, further solidifying NVIDIA's position as the go-to provider for AI hardware. These companies have expressed their enthusiasm for the breakthrough capabilities of the Blackwell GPU, which will enable them to accelerate discoveries and drive innovation across various industries.

The Blackwell architecture also introduces several other groundbreaking technologies, such as the fifth-generation NVLink, which delivers a staggering 1.8TB/s bidirectional throughput per GPU, ensuring seamless communication among hundreds of GPUs for the most complex large language models (LLMs). Additionally, the RAS Engine and advanced confidential computing capabilities enhance system uptime, resiliency, and data protection without compromising performance.

NVIDIA's commitment to accelerating data processing is evident in the inclusion of a dedicated decompression engine, which supports the latest formats and accelerates database queries. This development is particularly significant, as data processing is expected to become increasingly GPU-accelerated in the coming years.

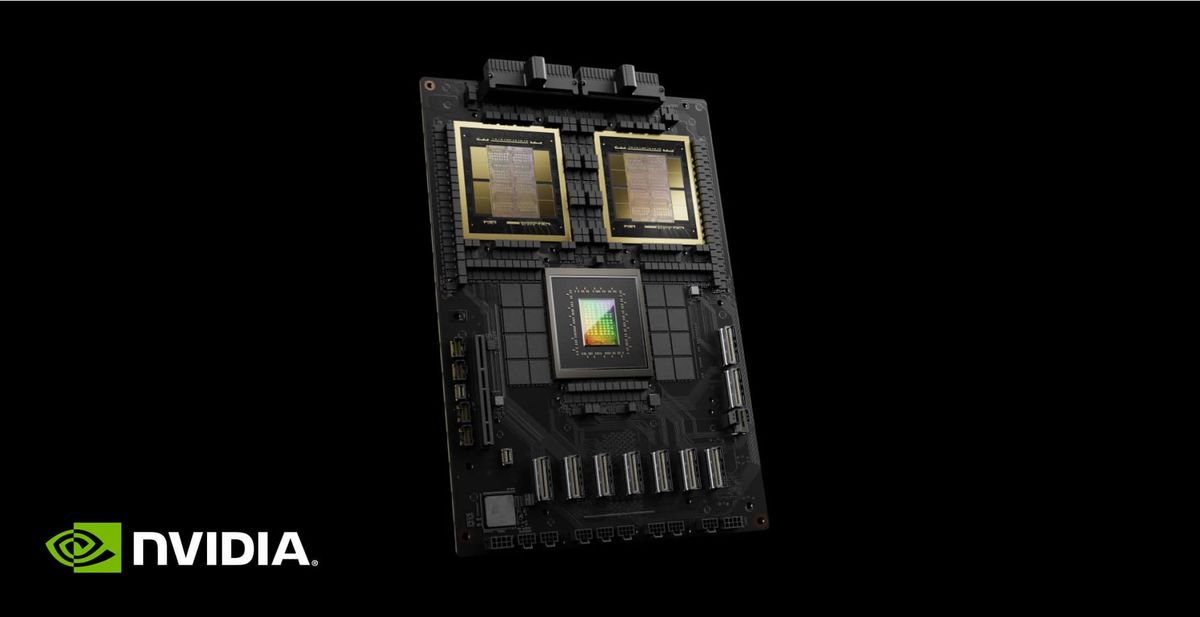

The GB200 NVL72, a multi-node, liquid-cooled, rack-scale system, combines 36 GB200 Grace Blackwell Superchips, providing up to a 30x performance increase compared to the same number of NVIDIA H100 Tensor Core GPUs for LLM inference workloads. This platform acts as a single GPU with 1.4 exaflops of AI performance and 30TB of fast memory, serving as a building block for the newest DGX SuperPOD.
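To put the rack-scale figures in perspective, the short Python sketch below divides the quoted totals evenly across the 36 superchips. It is back-of-the-envelope arithmetic only: the even split is a simplification, the two-GPUs-per-superchip count is an assumption taken from NVIDIA's published GB200 configuration rather than from this article, and the variable and function names are purely illustrative.

```python
# Totals quoted in the article for the 36-superchip rack-scale system.
SUPERCHIPS = 36
TOTAL_AI_EXAFLOPS = 1.4
TOTAL_FAST_MEMORY_TB = 30

# Assumption (not stated in the article): two Blackwell GPUs per superchip.
GPUS_PER_SUPERCHIP = 2


def even_share(total, units):
    """Return the share of `total` per unit, assuming an even split."""
    return total / units


gpus = SUPERCHIPS * GPUS_PER_SUPERCHIP

# Convert exaflops to petaflops and terabytes to gigabytes (decimal units).
total_petaflops = TOTAL_AI_EXAFLOPS * 1000
total_memory_gb = TOTAL_FAST_MEMORY_TB * 1000

petaflops_per_superchip = even_share(total_petaflops, SUPERCHIPS)
petaflops_per_gpu = even_share(total_petaflops, gpus)
memory_gb_per_superchip = even_share(total_memory_gb, SUPERCHIPS)

print(f"GPUs in system:             {gpus}")
print(f"AI petaflops per superchip: {petaflops_per_superchip:.1f}")
print(f"AI petaflops per GPU:       {petaflops_per_gpu:.1f}")
print(f"Fast memory per superchip:  {memory_gb_per_superchip:.0f} GB")
```

Under these assumptions, each superchip accounts for roughly 39 petaflops of AI performance and about 833 GB of fast memory, or a little under 20 petaflops per GPU. These are averages derived from the headline totals, not measured per-device specifications.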

Blackwell-based products will be available from a wide range of partners, including cloud service providers, server manufacturers, and software makers, starting later this year. This global network of partners will enable organizations across various industries to leverage the power of Blackwell for applications such as generative AI, engineering simulation, electronic design automation, and computer-aided drug design.

As Huang stated during his keynote, "Generative AI is the defining technology of our time. Blackwell is the engine to power this new industrial revolution." With the introduction of the Blackwell GPU, NVIDIA is poised to maintain its leadership in the AI hardware market, driving unprecedented advancements in artificial intelligence and accelerated computing.