OpenAI thinks that AI systems with greater-than-human capabilities across all areas, known as superintelligence, could emerge within the next 10 years. Systems with such vast capabilities could bring astounding benefits, but they could also pose serious risks if not developed carefully.

It is against this backdrop that OpenAI, in partnership with Eric Schmidt, has launched the "Superalignment Fast Grants," committing $10 million to research dedicated to the alignment and safety of these future superhuman AI systems. The grants range from $100,000 to $2 million for academic researchers, nonprofits and even individual engineers and scientists. There is also a $150,000 fellowship specifically aimed at supporting graduate students.

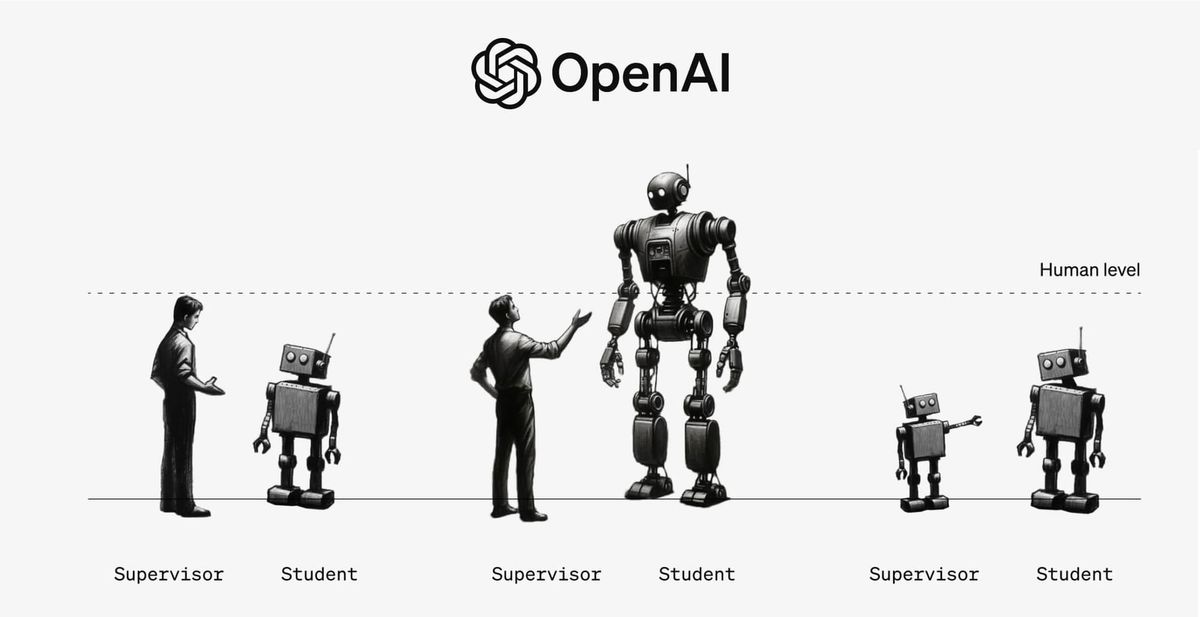

OpenAI researchers believe new techniques will be essential since humans will not be able to fully comprehend or oversee superhuman AI's complex behaviors. For example, an advanced AI system could generate millions of lines of code in a novel programming language that humans cannot easily evaluate as safe or dangerous. The fundamental research challenge is ensuring models much smarter than humans reliably follow operator intentions and avoid unintended harmful behaviors.

The grants will support research directions such as weak-to-strong generalization, interpretability of model internals, AI-assisted human oversight of AI systems, and the honesty and faithfulness of model reasoning chains. The overarching goal is to achieve breakthroughs that make it possible to control superhuman systems even when humans have only a limited understanding of how those systems work.

With these grants, and by rallying top talent to work on one of technology's most important problems, OpenAI hopes to accelerate progress toward keeping advanced AI systems aligned with human interests. The organization expressed optimism that dedicated work from researchers across fields could produce solutions well before advanced AI itself transforms society.