Stability AI expanded its language model offerings to the international market today with the release of Japanese StableLM Alpha, which the company describes as the best-performing openly available language model for Japanese speakers.

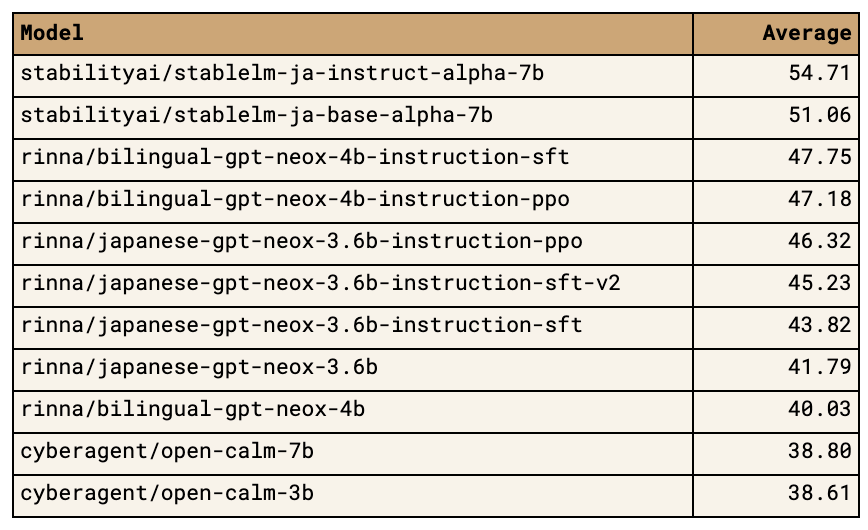

The new 7-billion-parameter general-purpose language model aims to give Japanese users stronger AI text-generation capabilities. According to Stability AI, Japanese StableLM outperformed four other Japanese language models in benchmark evaluations the company conducted.

“We are proud of this first big step towards contributing to the Japanese generative AI ecosystem,” said Meng Lee, Project Lead for Japanese StableLM, in an announcement. “We look forward to continuing to create models across several modalities, built specifically to reflect Japanese culture, language and aesthetics.”

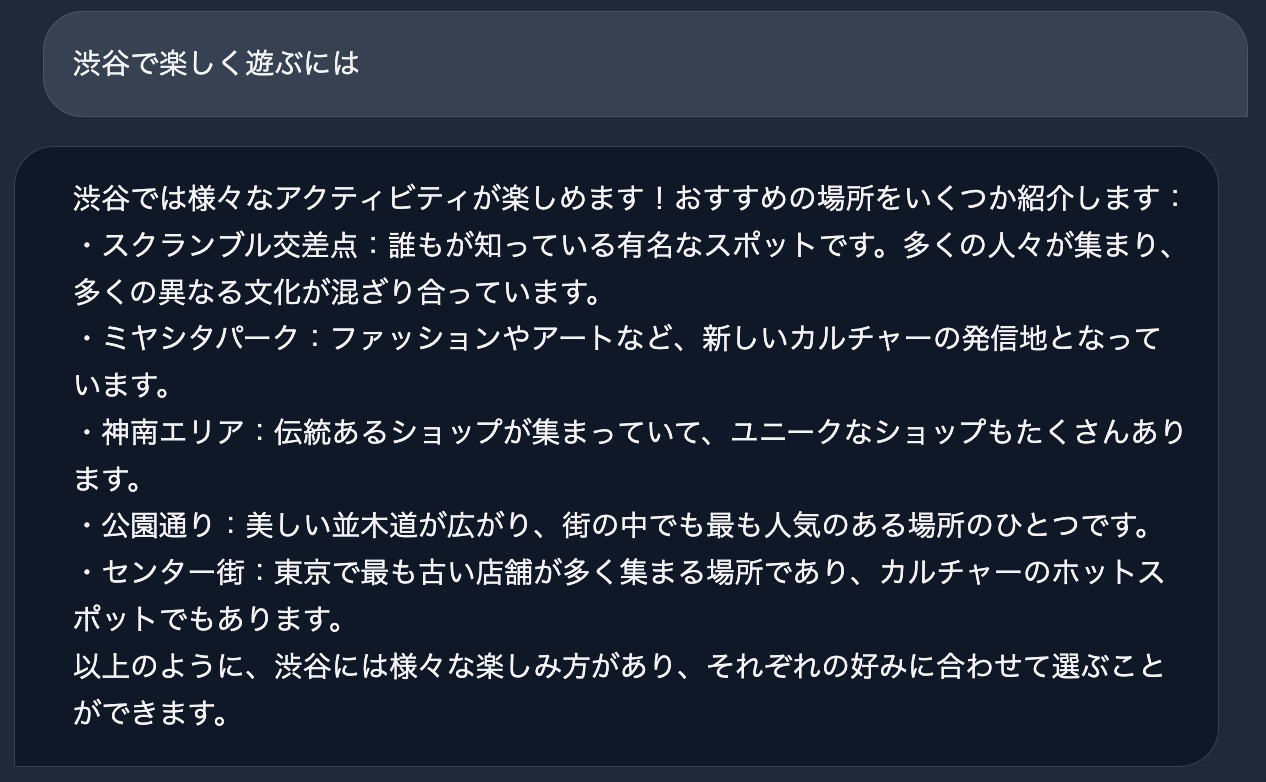

Two versions of the new model were released: Japanese StableLM Base Alpha 7B and Japanese StableLM Instruct Alpha 7B. The Base model is designed for general text generation and was trained on large-scale Japanese and English data. The Instruct model is further trained with supervised fine-tuning to follow user prompts and instructions.

In benchmark testing with EleutherAI's lm-evaluation-harness, the Instruct model achieved the highest score among the Japanese language models evaluated, averaging 54.71 across eight tasks.

Both models are now available on the Hugging Face Hub, where users can test them for inference and further training. The Base model is released under the Apache 2.0 license, while the Instruct model is licensed for non-commercial research use only.

The launch gives Japanese AI developers and researchers new generative models tailored to their language. It also marks Stability AI's first language model beyond English; the company's earlier releases include the English StableLM models and the Stable Diffusion image generator. The move could signal further expansion into other international markets as generative AI adoption grows worldwide.