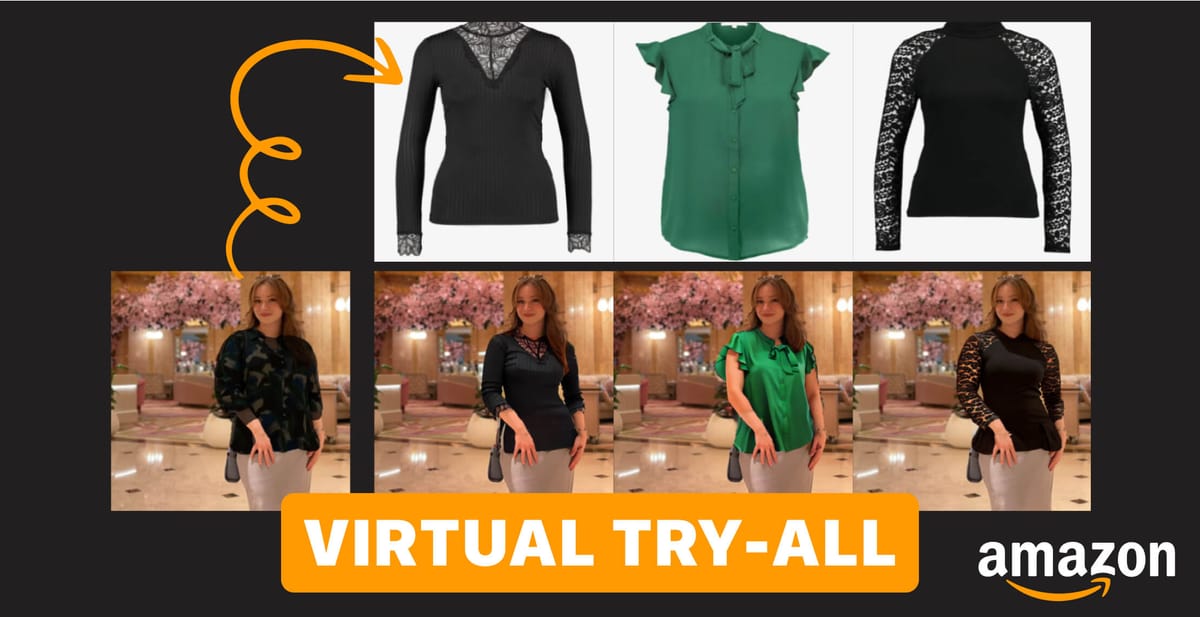

Shopping online can be hit or miss because it's hard to visualize products before you buy. Amazon hopes to change this with Diffuse to Choose (DTC), a new AI system that lets you virtually place products in almost any setting.

Described in a new paper by Amazon researchers, DTC lets shoppers seamlessly blend product images into their own photos. The result is a “Virtual Try-All” experience in which items appear realistically integrated into the chosen scene.

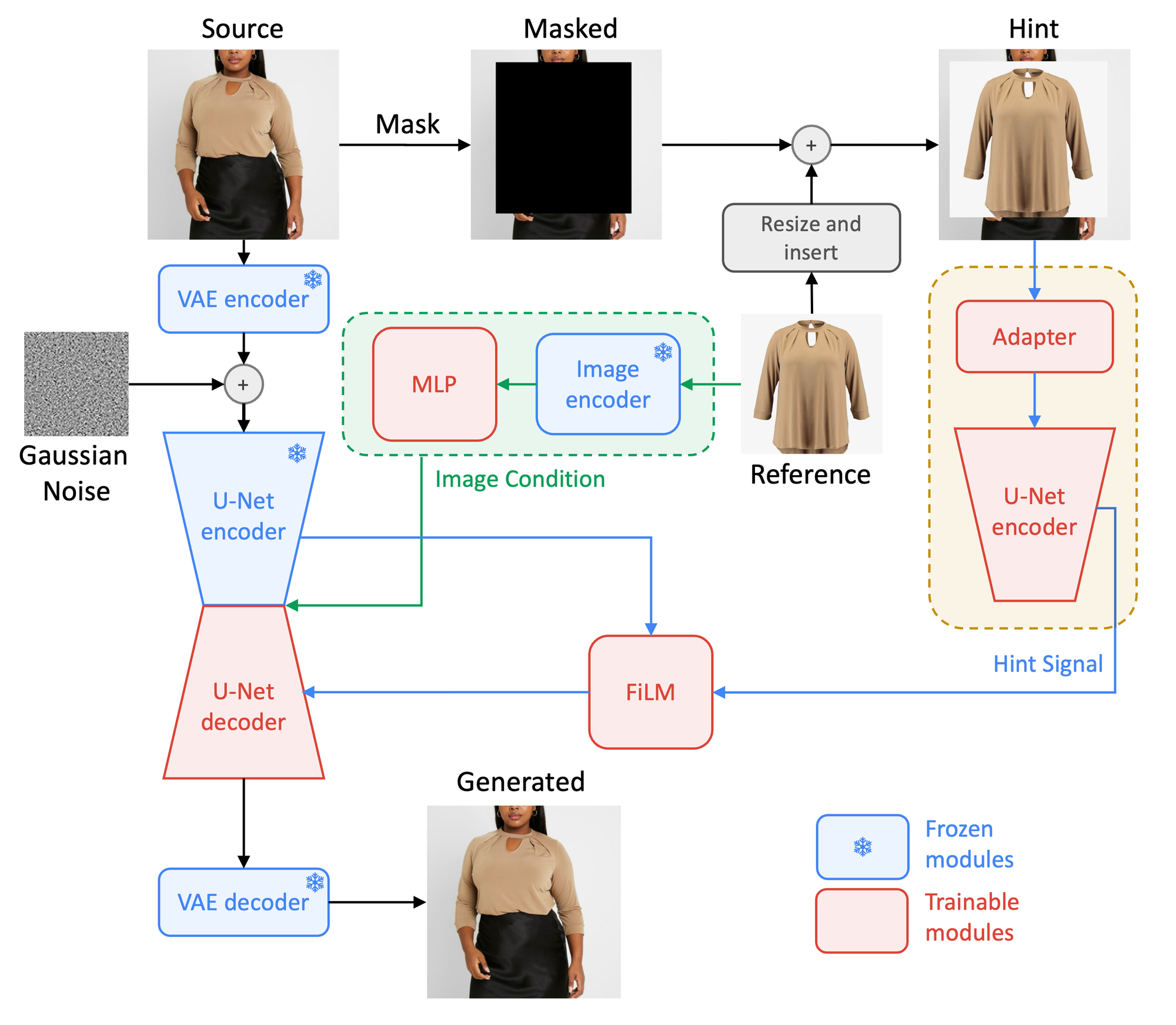

“Recent diffusion models inherently contain a world model, rendering them suitable for this task within an inpainting context,” the paper explains. “However, traditional image-conditioned diffusion models often fail to capture the fine-grained details of products.”

DTC addresses this with a diffusion-based, image-conditioned inpainting model designed to keep inference fast while preserving high-fidelity product details, so that the inserted item fits the semantics of the scene. Its key innovation is a secondary U-Net encoder that injects fine-grained product detail into the diffusion process. To build that encoder's input, the model masks the region of the source image where the product should appear and pastes the reference product image into the masked area; the resulting features are then adapted and aligned with the main diffusion model.
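The masking-and-pasting step described above can be sketched in a few lines. This is a minimal illustration under our own assumptions, not Amazon's code: images are tiny grayscale grids stored as nested Python lists, the function names (`mask_source`, `bounding_box`, `resize_nearest`, `make_hint`) are hypothetical, and we assume the reference image is resized with nearest-neighbour sampling to fit the mask's bounding box before pasting. The real model operates on RGB tensors and may preprocess differently.

```python
from typing import List, Tuple

Image = List[List[float]]  # grayscale image: rows of pixel values (assumption for illustration)
Mask = List[List[int]]     # 1 = region to inpaint, 0 = keep the source pixel


def mask_source(source: Image, mask: Mask) -> Image:
    """Return a copy of the source with every masked pixel set to 0.0."""
    return [[0.0 if m else p for p, m in zip(row, mrow)]
            for row, mrow in zip(source, mask)]


def bounding_box(mask: Mask) -> Tuple[int, int, int, int]:
    """Return (top, bottom, left, right) of the masked area; bottom/right are exclusive."""
    rows = [i for i, r in enumerate(mask) if any(r)]
    cols = [j for j in range(len(mask[0])) if any(r[j] for r in mask)]
    if not rows:
        raise ValueError("mask is empty")
    return rows[0], rows[-1] + 1, cols[0], cols[-1] + 1


def resize_nearest(img: Image, h: int, w: int) -> Image:
    """Nearest-neighbour resize to h x w (a simplifying assumption)."""
    src_h, src_w = len(img), len(img[0])
    return [[img[i * src_h // h][j * src_w // w] for j in range(w)]
            for i in range(h)]


def make_hint(source: Image, mask: Mask, reference: Image) -> Image:
    """Hypothetical hint image: masked source with the reference pasted into the mask."""
    hint = mask_source(source, mask)
    top, bottom, left, right = bounding_box(mask)
    patch = resize_nearest(reference, bottom - top, right - left)
    for i in range(top, bottom):
        for j in range(left, right):
            if mask[i][j]:  # only overwrite pixels that are actually masked
                hint[i][j] = patch[i - top][j - left]
    return hint


# Usage: a 4x4 "room" photo, a 2x2 mask in the middle, and a 2x2 "product" image.
source = [[1.0] * 4 for _ in range(4)]
mask = [[0, 0, 0, 0],
        [0, 1, 1, 0],
        [0, 1, 1, 0],
        [0, 0, 0, 0]]
reference = [[0.5, 0.25],
             [0.75, 0.125]]
masked = mask_source(source, mask)
hint = make_hint(source, mask, reference)
```

In this reading, the masked source is what the main diffusion U-Net is asked to inpaint, while the hint image, which carries the product's pixels in place, is the kind of input a secondary encoder could use to supply fine-grained detail. How DTC then fuses those features is beyond what this sketch attempts to show.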

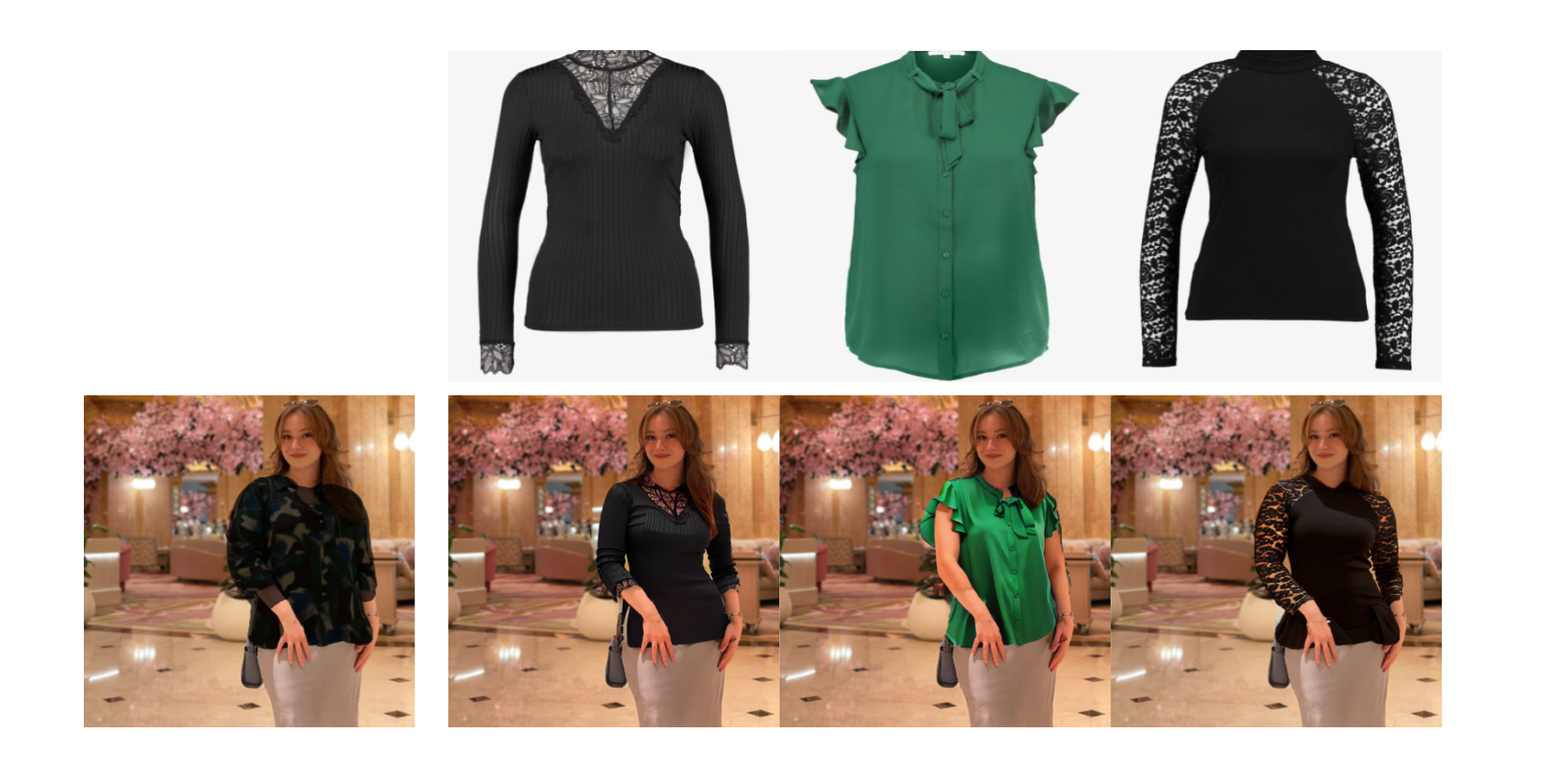

The paper validates DTC on both proprietary and public datasets, demonstrating quantitative and qualitative advantages over Paint By Example, a popular diffusion-based inpainting technique. Importantly, DTC matches few-shot personalization methods without requiring per-product fine-tuning.

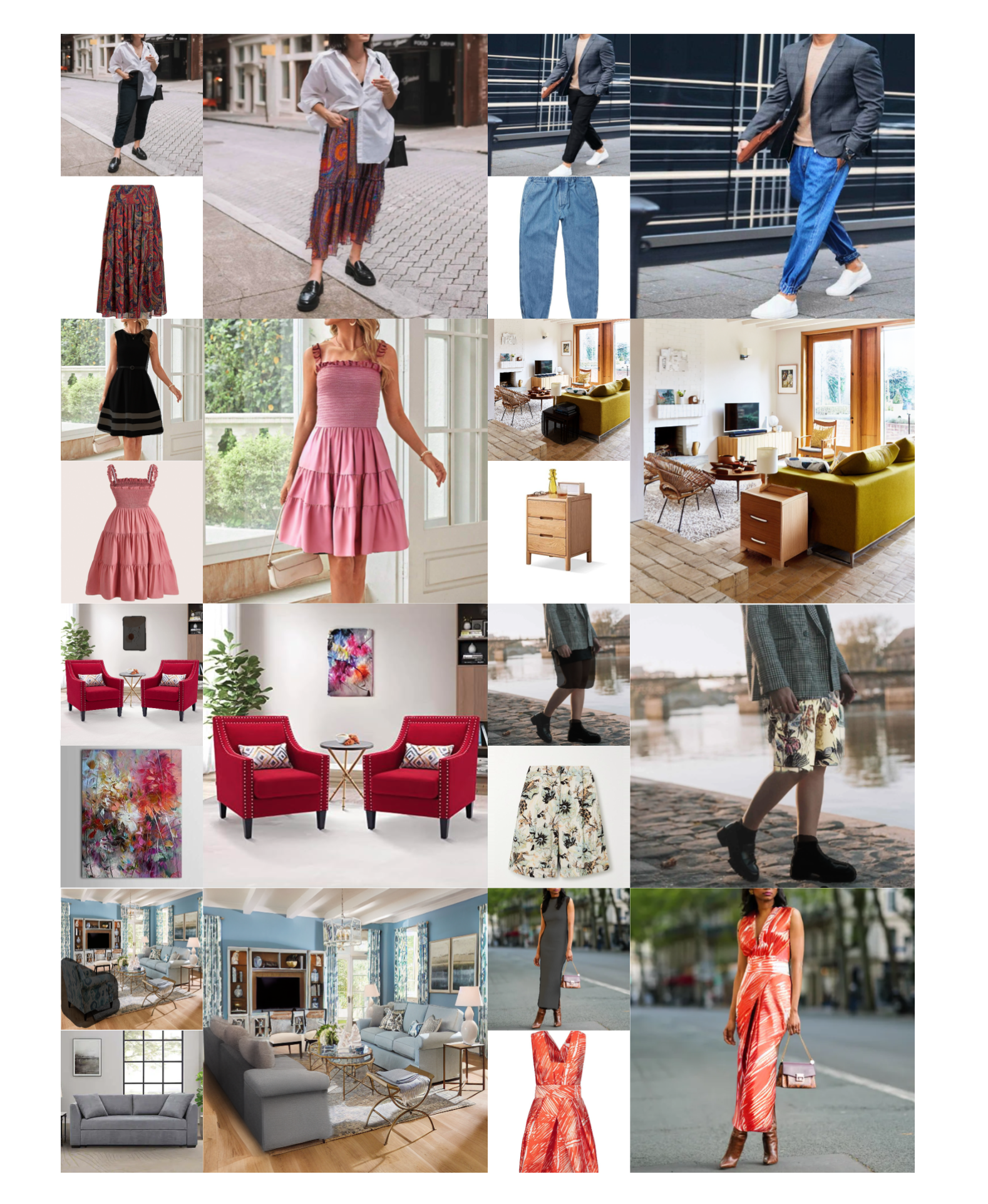

DTC has clear practical applications. For consumers, it means being able to place an item from an online store into their own living space or personal photos and see how it fits and looks. This improves the shopping experience and helps buyers make more informed purchasing decisions. For retailers, it offers a new way to engage customers and could reduce return rates by representing products more accurately.

Early examples show DTC realistically blending a range of products, from clothing to furniture and decor items. The coherent lighting and shadows make items appear truly present in their inserted scenes.

DTC also supports iterative virtual decorating and outfit building. Users can adjust masks to change how clothing is worn, such as tucking in a shirt or rolling up sleeves. This flexibility makes DTC a promising platform for personalized ecommerce experiences.

Amazon is not alone in exploring virtual try-on. Last summer, we covered similar research from Google with its TryOnDiffusion model.

While Google's research focused primarily on improving realism for apparel, Amazon's DTC aims to work across product categories. Both efforts highlight the growing interest in using AI to make online shopping more immersive.

Amazon says it will release the code and a demo soon. If the research lives up to its promises, integrating tools like DTC into the online shopping experience may spearhead the next evolution of ecommerce.