Alongside the release of their new Mixtral 8x7B model, Mistral AI has opened beta access to "La plateforme", providing developers with easy access to their top-performing generative and embedding models. The initial services demonstrate efficient deployment and customizability using Mistral's open models.

The launch includes three chat endpoints:

- Mistral-tiny: The most cost-effective endpoint, Mistral-tiny, operates on the upgraded

Mistral 7B Instruct v0.2base model with higher context length (now 32k) and achieving a score of 7.6 on MT-Bench. Currently, it supports only English and is designed for applications where cost efficiency is paramount. - Mistral-small: This endpoint utilizes the newly released

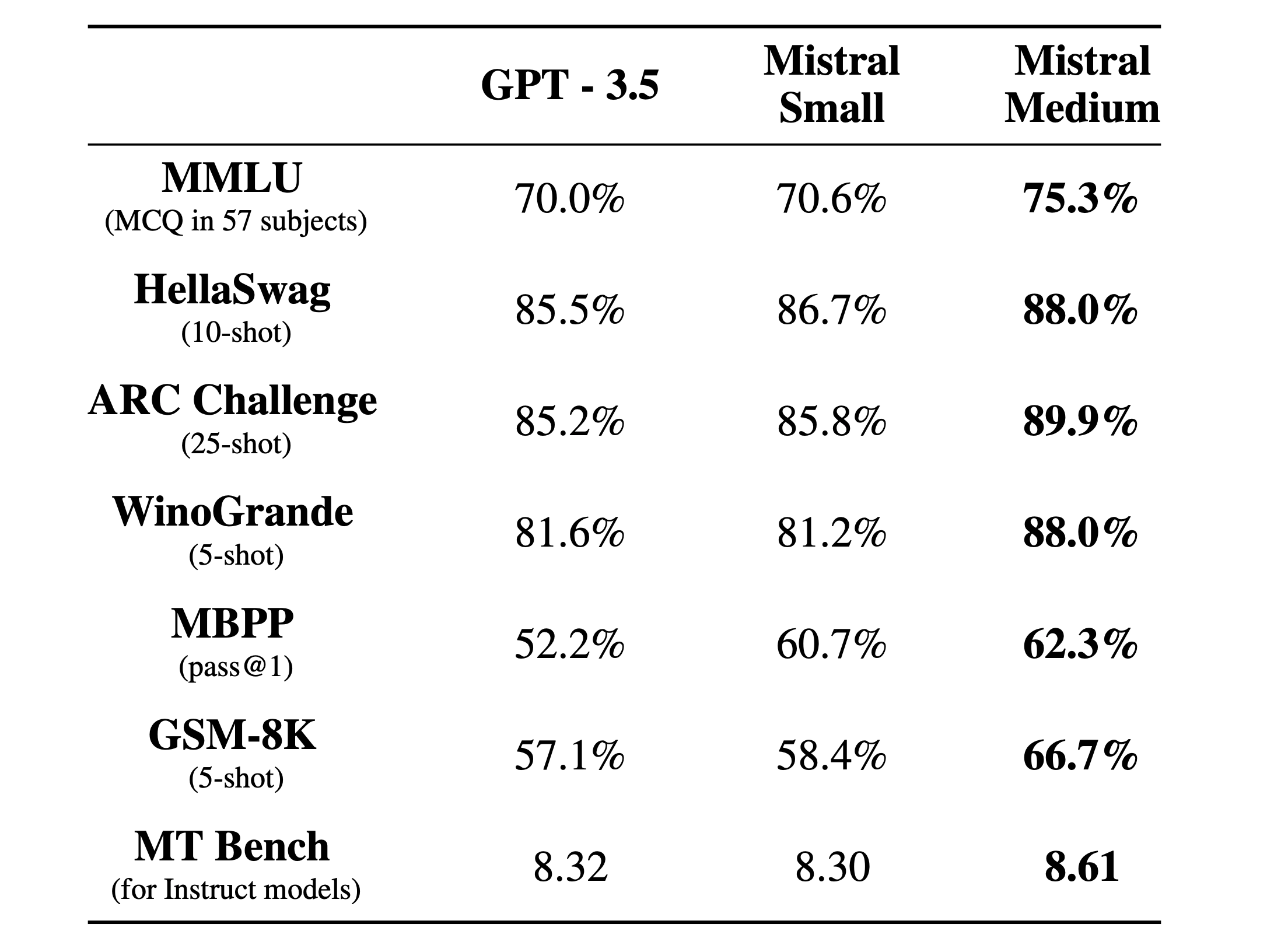

Mistral 8x7B Instruct v0.1model, proficient in five languages—English, French, Italian, German, and Spanish—and excels in code generation. With an MT-Bench score of 8.3, Mistral-small offers a balanced blend of performance and affordability. - Mistral-medium: Representing the pinnacle of quality in Mistral AI’s offerings, Mistral-medium uses a high-performance prototype model that outperforms GPT-3.5 on all metrics. It matches the language and code capabilities of Mistral-small but surpasses it with a higher MT-Bench score of 8.6, making it ideal for applications demanding the highest quality.
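Choosing between the three endpoints comes down to cost, quality, and language coverage. The sketch below encodes the figures listed above (MT-Bench scores and supported languages) and picks the cheapest endpoint that meets a request's requirements. The numeric `tier` is a hypothetical cost rank, since no prices are quoted here, and `pick_endpoint` is a hypothetical helper, not part of any Mistral client library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    name: str
    tier: int  # hypothetical cost rank: 0 = cheapest (no prices given in the text)
    mt_bench: float  # MT-Bench score as stated above
    languages: frozenset[str]


# The five languages listed for Mistral-small and Mistral-medium.
FIVE_LANGUAGES = frozenset({"en", "fr", "it", "de", "es"})

ENDPOINTS = [
    Endpoint("mistral-tiny", 0, 7.6, frozenset({"en"})),
    Endpoint("mistral-small", 1, 8.3, FIVE_LANGUAGES),
    Endpoint("mistral-medium", 2, 8.6, FIVE_LANGUAGES),
]


def pick_endpoint(language: str, min_mt_bench: float = 0.0) -> str:
    """Return the cheapest endpoint that supports `language` and meets the quality floor."""
    candidates = [
        e for e in ENDPOINTS
        if language in e.languages and e.mt_bench >= min_mt_bench
    ]
    if not candidates:
        raise ValueError("no endpoint satisfies these requirements")
    # Lowest tier = most cost-effective among the qualifying endpoints.
    return min(candidates, key=lambda e: e.tier).name


print(pick_endpoint("en"))                    # English only, no quality floor
print(pick_endpoint("fr"))                    # French rules out Mistral-tiny
print(pick_endpoint("en", min_mt_bench=8.5))  # quality floor rules out tiny and small
```

Ranking by a single cost tier keeps the sketch simple; a real selector would likely also weigh latency, context length, and actual per-token prices.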

Additionally, Mistral AI introduces Mistral-embed, an embedding model that produces 1024-dimensional vectors and is tailored for retrieval applications. It scores 55.26 on the MTEB retrieval benchmark, which makes it suitable for a wide range of data retrieval and analysis tasks.
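In a retrieval setup, documents and queries are embedded into the same 1024-dimensional space and the documents closest to the query are returned. The sketch below ranks documents by cosine similarity, a common choice for comparing embeddings; using cosine here is an assumption, not something the announcement specifies. The toy vectors are hypothetical stand-ins for real Mistral-embed output, which would come from the API.

```python
import math

EMBED_DIM = 1024  # dimensionality stated for Mistral-embed


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors of equal length (0.0 if either is all zeros)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query: list[float], docs: dict[str, list[float]], k: int = 3) -> list[tuple[str, float]]:
    """Return the k document ids most similar to the query, best first."""
    scored = [(doc_id, cosine(query, vec)) for doc_id, vec in docs.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


def basis(i: int) -> list[float]:
    """Hypothetical toy embedding: a unit vector along axis i."""
    v = [0.0] * EMBED_DIM
    v[i] = 1.0
    return v


# Toy corpus: "c" points halfway between axes 0 and 1.
docs = {
    "a": basis(0),
    "b": basis(1),
    "c": [0.5 if i < 2 else 0.0 for i in range(EMBED_DIM)],
}
print(top_k(basis(0), docs, k=2))
```

A linear scan like this is fine for small corpora; larger collections would typically use a vector index instead.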

Mistral's platform aims to empower developers with cutting-edge generative AI. The API follows the chat interface conventions popularized by other services, which makes migrating existing applications easier. Client libraries are provided for Python and JavaScript.
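Because the API follows the familiar chat format, a request is a list of role-tagged messages plus a model name. The sketch below builds such a request with only the Python standard library rather than Mistral's client libraries. The URL `https://api.mistral.ai/v1/chat/completions`, the bearer-token header, and the `choices[0].message.content` response path are assumptions based on the common chat-completion convention; check them against Mistral's API reference before use. `build_chat_request` is a hypothetical helper.

```python
import json
import urllib.request

# Assumed chat endpoint; verify against Mistral's API reference.
API_URL = "https://api.mistral.ai/v1/chat/completions"


def build_chat_request(api_key: str, model: str, user_message: str) -> urllib.request.Request:
    """Build (but do not send) a POST request in the common chat-completion format."""
    payload = {
        "model": model,  # e.g. "mistral-tiny", "mistral-small", "mistral-medium"
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Accept": "application/json",
            "Authorization": f"Bearer {api_key}",  # assumed bearer-token auth
        },
        method="POST",
    )


req = build_chat_request("YOUR_API_KEY", "mistral-tiny", "What is the best French cheese?")

# Sending it requires a valid key and network access. The response shape below
# is assumed to follow the same chat-completion convention:
# with urllib.request.urlopen(req) as resp:
#     reply = json.load(resp)
#     print(reply["choices"][0]["message"]["content"])
```

Switching endpoints only means changing the `model` string, which is the practical payoff of following an established interface.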

As Mistral moves from closed beta to general availability, they plan to continue expanding capacity and services. Interested users can sign up now for early access. Mistral's ultimate vision is enabling community access to original models that will drive the next wave of AI innovation. The launch of performant embedding and generative endpoints serves as the first step on that roadmap.