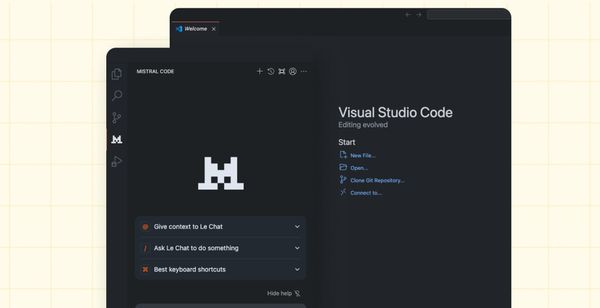

Mistral Launches All-in-One Coding Assistant, Mistral Code

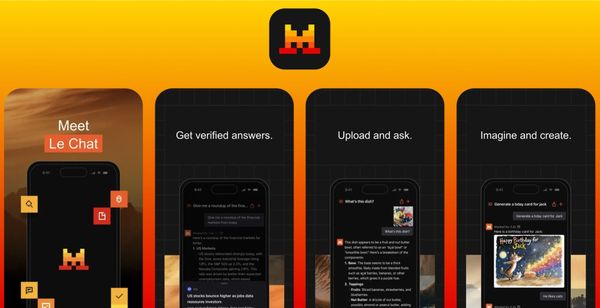

Mistral AI has launched Mistral Code, an enterprise-focused coding assistant that addresses the security and compliance pain points that have kept many large companies from fully adopting AI development tools.
