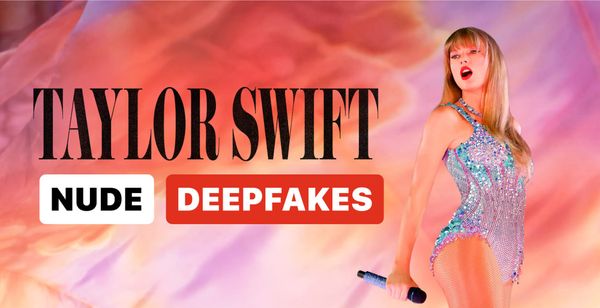

Trump Signs Bipartisan Law Criminalizing Deepfakes and Revenge Porn

President Trump has signed the bipartisan Take It Down Act into law. The act criminalizes the distribution of nonconsensual explicit images, including AI-generated deepfakes, and requires online platforms to remove such content within 48 hours of a victim's request.