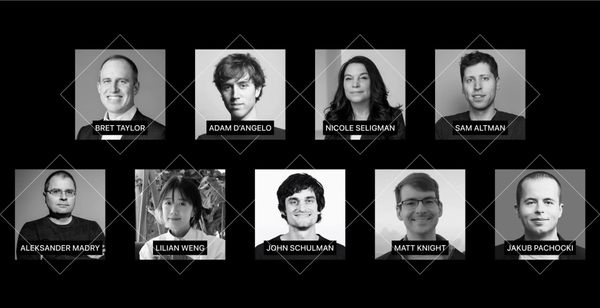

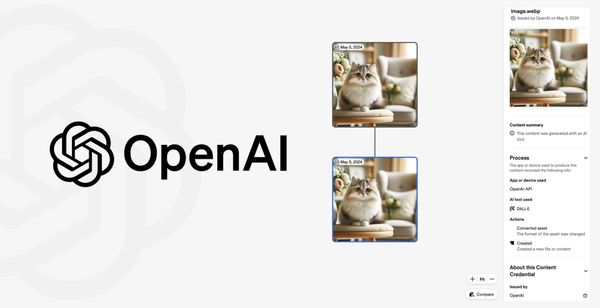

AI Whistleblowers Call for Increased Safety Oversight and Employee Protections

The letter makes a case for stronger whistleblower protections, arguing that current measures are insufficient to address the unique challenges posed by AI.
